1

LECTURE NOTES

ON

CLOUD COMPUTING

2

UNIT-I

SYSTEM MODELING, CLUSTERING AND

VIRTUALIZATION:

1.

DISTRIBUTED SYSTEM MODELS AND ENABLING

TECHNOLOGIES

The Age of Internet Computing

Billions of people use the Internet every day. As a result,

supercomputer sites and large data centers must provide high-

performance computing services to huge numbers of Internet

users concurrently. Because of this high demand, the Linpack

Benchmark for high-performance computing (HPC)

applications is no longer optimal for measuring system

performance.

The emergence of computing clouds instead demands high-

throughput computing (HTC) systems built with parallel and

distributed computing technologies . We have to upgrade data

centers using fast servers, storage systems, and high- bandwidth

networks. The purpose is to advance network-based computing

and web services with the emerging new technologies.

The Platform Evolution

Computer technology has gone through five generations of

development, with each generation lasting from 10 to 20 years.

Successive generations are overlapped in about 10 years. For

instance, from 1950 to 1970, a handful of mainframes, including

the IBM 360 and CDC 6400, were built to satisfy the demands

of large businesses and government organizations.

3

From 1960 to 1980, lower-cost mini- computers such as the

DEC PDP 11 and VAX Series became popular among small

businesses and on college campuses.

From 1970 to 1990, we saw widespread use of personal

computers built with VLSI microproces- sors. From 1980 to

2000, massive numbers of portable computers and pervasive

devices appeared in both wired and wireless applications. Since

1990, the use of both HPC and HTC systems hidden in.

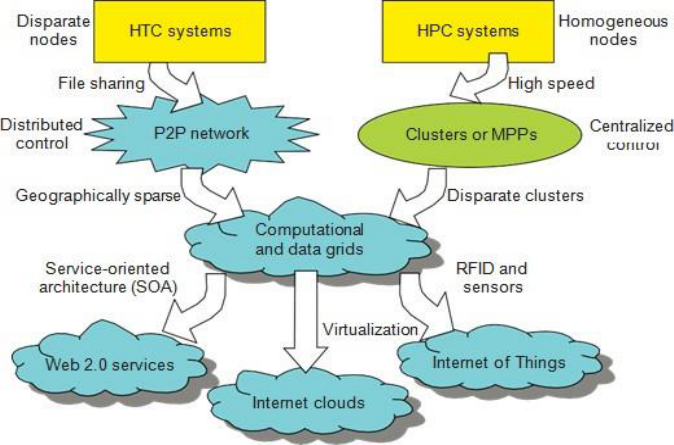

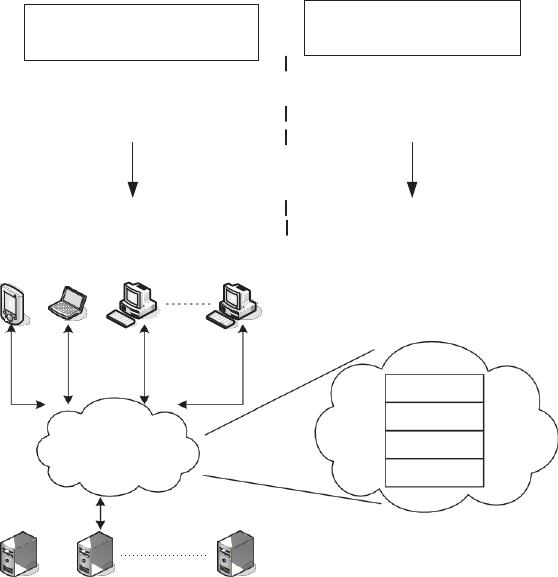

Fig 1. Evolutionary trend toward parallel, distributed, and cloud

computing with clusters, MPPs, P2P networks, grids, clouds, web services,

and the Internet of Things.

High-Performance Computing

For many years, HPC systems emphasize the raw speed

performance. The speed of HPC systems has increased from

Gflops in the early 1990s to now Pflops in 2010. This

4

improvement was driven mainly by the demands from

scientific, engineering, and manufacturing communities.

For example,the Top 500 most powerful computer systems in the

world are measured by floating-point speed in Linpack

benchmark results. However, the number of supercomputer users

is limited to less than 10% of all computer users.

Today, the majority of computer users are using desktop

computers or large servers when they conduct Internet searches

and market-driven computing tasks.

High-Throughput Computing

The development of market-oriented high-end computing

systems is undergoing a strategic change from an HPC paradigm

to an HTC paradigm. This HTC paradigm pays more attention to

high-flux computing.

The main application for high-flux computing is in Internet

searches and web services by millions or more users

simultaneously. The performance goal thus shifts to measure high

throughput or the number of tasks completed per unit of time.

HTC technology needs to not only improve in terms of batch

processing speed, but also address the acute problems of cost,

energy savings, security, and reliability at many data and

enterprise computing centers. This book will address both HPC

and HTC systems to meet the demands of all computer users.

Three New Computing Paradigms

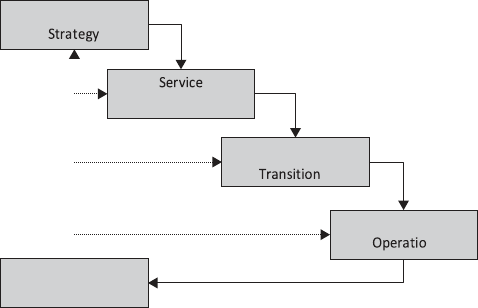

A Figure 1. illustrates, with the introduction of SOA, Web 2.0

services become available. Advances in virtualization make it

possible to see the growth of Internet clouds as a new computing

paradigm. The maturity of radio-frequency identification

(RFID), Global Positioning System (GPS), and sensor

technologies has triggered the development of the Internet of

Things (IoT).

5

Computing Paradigm Distinctions

. The high-technology community has argued for many years

about the precise definitions of centralized computing, parallel

computing, distributed computing, and cloud computing. In

general, distributed computing is the opposite of centralized

computing. The field of parallel computing overlaps with

distributed computing to a great extent, and cloud computing

overlaps with distributed, centralized, and parallel computing.

The following list defines these terms more clearly; their

architectural and operational differences are discussed further in

subsequent chapters.

Centralized computing. This is a computing paradigm by which

all computer resources are centralized in one physical system. All

resources (processors, memory, and storage) are fully shared and

tightly coupled within one integrated OS. Many data centers and

supercomputers are centralized systems, but they are used in

parallel, distributed, and cloud computing applications

Parallel computing In parallel computing, all processors are

either tightly coupled with centralized shared memory or loosely

coupled with distributed memory. Some authors refer to this

discipline as parallel processing. Interprocessor communication

is accomplished through shared memory or via message passing.

A computer system capable of parallel computing is commonly

known as a parallel computer . Programs running in a parallel

computer are called parallel programs. The process of writing

parallel programs is often referred to as parallel programming.

Distributed computing This is a field of computer

science/engineering that studies distributed systems. A

distributed system consists of multiple autonomous computers,

each having its own private memory, communicating through a

computer network. Information exchange in a distributed

6

system is accomplished through message passing. A computer

program that runs in a distributed system is known as a distributed

program. The process of writing distributed programs is referred

to as distributed programming.

Cloud computing An Internet cloud of resources can be either a

centralized or a distributed computing system. The cloud applies

parallel or distributed computing, or both. Clouds can be built

with physical or virtualized resources over large data centers that

are centralized or distributed. Some authors consider cloud

computing to be a form of utility computing or service computing

2.

COMPUTER CLUSTERS FOR SCALABLE PARALLEL

COMPUTING

Technologies for network-based systems

With the concept of scalable computing under our belt, it‘s time

to explore hardware, software, and network technologies for

distributed computing system design and applications. In

particular, we will focus on viable approaches to building

distributed operating systems for handling massive parallelism in

a distributed environment.

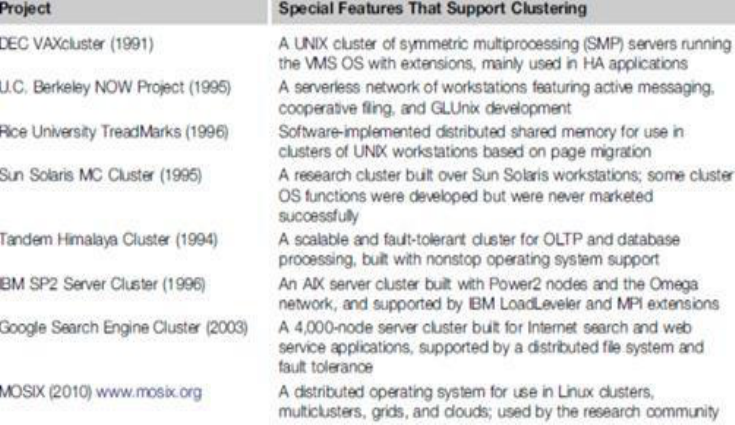

Cluster Development Trends Milestone Cluster Systems

Clustering has been a hot research challenge in computer

architecture. Fast communication, job scheduling, SSI, and HA

are active areas in cluster research. Table 2.1 lists some milestone

cluster research projects and commercial cluster products. Details

of these old clusters can be found in

7

TABLE 2.1:MILE STONE CLUSTER RESEARCH PROJECTS

Fundamental Cluster Design Issues

Scalable Performance: This refers to the fact that scaling of

resources (cluster nodes, memory capacity, I/O bandwidth, etc.)

leads to a proportional increase in performance. Of course, both

scale-up and scale down capabilities are needed, depending on

application demand or cost effectiveness considerations.

Clustering is driven by scalability.

Single-System Image (SSI): A set of workstations connected by

an Ethernet network is not necessarily a cluster. A cluster is a

single system. For example, suppose a workstation has a 300

Mflops/second processor, 512 MB of memory, and a 4 GB disk

and can support 50 active users and 1,000 processes.

By clustering 100 such workstations, can we get a single system

that is equivalent to one huge workstation, or a mega-station, that

has a 30 Gflops/second processor, 50 GB of memory, and a

8

400 GB disk and can support 5,000 active users and 100,000

processes? SSI techniques are aimed at achieving this goal.

Internode Communication: Because of their higher node

complexity, cluster nodes cannot be packaged as compactly as

MPP nodes. The internode physical wire lengths are longer in a

cluster than in an MPP. This is true even for centralized clusters.

A long wire implies greater interconnect network latency. But

more importantly, longer wires have more problems in terms of

reliability, clock skew, and cross talking. These problems call for

reliable and secure communication protocols, which increase

overhead. Clusters often use commodity networks (e.g., Ethernet)

with standard protocols such as TCP/IP.

Fault Tolerance and Recovery: Clusters of machines can be

designed to eliminate all single points of failure. Through

redundancy, a cluster can tolerate faulty conditions up to a certain

extent.

Heartbeat mechanisms can be installed to monitor the running

condition of all nodes. In case of a node failure, critical jobs

running on the failing nodes can be saved by failing over to the

surviving node machines. Rollback recovery schemes restore the

computing results through periodic check pointing.

9

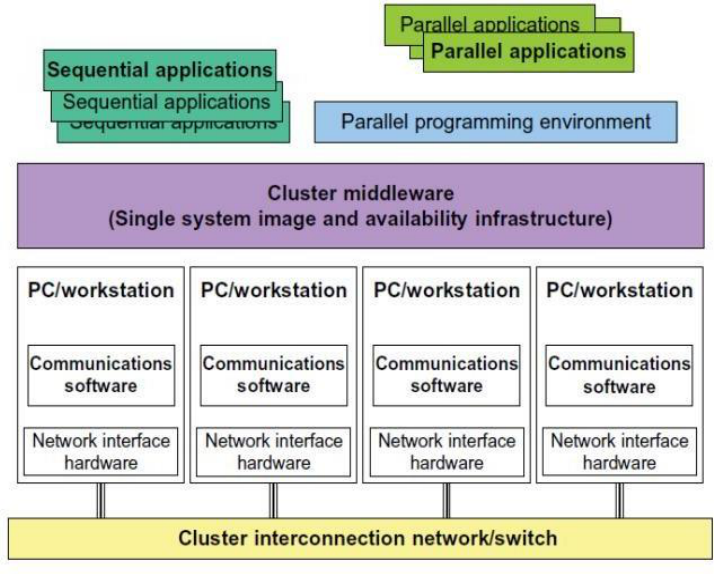

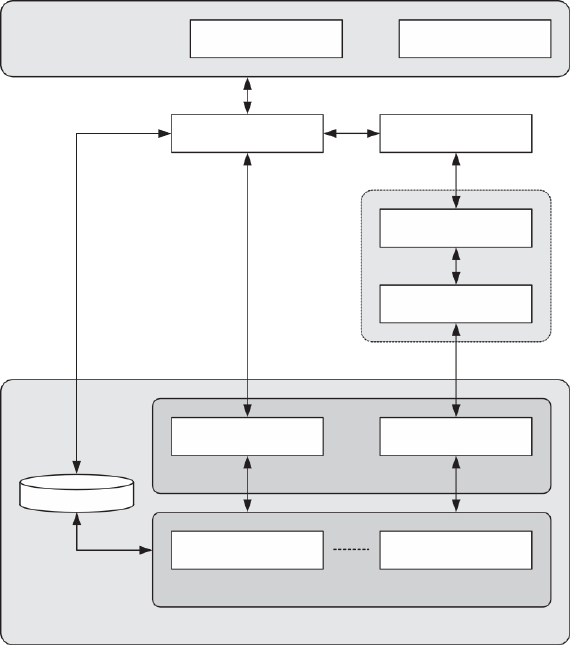

Fig2.1: Architecture of Computer Cluster

3.

IMPLEMENTATION LEVELS OF VIRTUALIZATION

Virtualization is a computer architecture technology by which

multiple virtual machines (VMs) are multiplexed in the same

hardware machine. The idea of VMs can be dated back to the

1960s . The purpose of a VM is to enhance resource sharing by

many users and improve computer performance in terms of

resource utilization and application flexibility.

Hardware resources (CPU, memory, I/O devices, etc.) or software

resources (operating system and software libraries) can be

virtualized in various functional layers. This virtualization

technology has been revitalized as the demand for distributed and

cloud computing increased sharply in recent years .

10

The idea is to separate the hardware from the software to yield

better system efficiency. For example, computer users gained

access to much enlarged memory space when the concept of

virtual memory was introduced. Similarly, virtualization

techniques can be applied to enhance the use of compute engines,

networks, and storage. In this chapter we will discuss VMs and

their applications for building distributed systems. According to

a 2009 Gartner Report, virtualization was the top strategic

technology poised to change the computer industry. With

sufficient storage, any computer platform can be installed in

another host computer, even if they use proc.

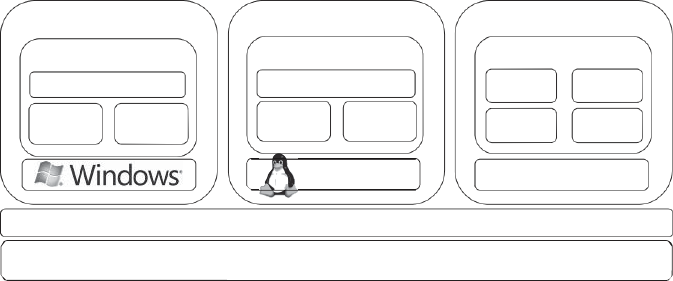

Levels of Virtualization Implementation

A traditional computer runs with a host operating system

specially tailored for its hardware architecture ,After

virtualization, different user applications managed by their own

operating systems (guest OS) can run on the same hardware,

independent of the host OS.

This is often done by adding additional software, called a

virtualization layer .This virtualization layer is known as

hypervisor or virtual machine monitor (VMM). The VMs are

shown in the upper boxes, where applications run with their own

guest OS over the virtualized CPU, memory, and I/O resources.

The main function of the software layer for virtualization is to

virtualize the physical hardware of a host machine into virtual

resources to be used by the VMs, exclusively. This can be

implemented at various operational levels, as we will discuss

shortly.

The virtualization software creates the abstraction of VMs by

interposing a virtualization layer at various levels of a computer

11

system. Common virtualization layers include the instruction set

architecture (ISA) level, hardware level, operating system level,

library support level, and application level.

12

UNIT – II

FOUNDATIONS

1.

INTRODUCTION TO CLOUD COMPUTING

Cloud is a parallel and distributed computing system

consisting of a collection of inter-connected and virtualized

computers that are dynamically provisioned and presented as

one or more unified computing resources based on service-

level agreements (SLA) established through negotiation

between the service provider and consumers.

Clouds are a large pool of easily usable and accessible

virtualized resources (such as hardware, development

platforms and/or services). These resources can be

dynamically reconfigured to adjust to a variable load (scale),

allowing also for an optimum resource utilization. This pool

of resources is typically exploited by a pay-per- use model in

which guarantees are offered by the Infrastructure Provider

by means of customized Service Level Agreements.

ROOTS OF CLOUD COMPUTING

The roots of clouds computing by observing the advancement

of several technologies, especially in hardware

(virtualization, multi-core chips), Internet technologies (Web

services, service-oriented architectures, Web 2.0), distributed

computing (clusters, grids), and systems management

(autonomic computing, data center automation).

From Mainframes to Clouds

We are currently experiencing a switch in the IT world, from

in-house generated computing power into utility-

13

supplied computing resources delivered over the Internet as

Web services. This trend is similar to what occurred about a

century ago when factories, which used to generate their own

electric power, realized that it is was cheaper just plugging

their machines into the newly formed electric power grid.

Computing

delivered

as

a

utility

can

be

defined

as

―

on

demand delivery of infrastructure, applications, and business

processes in a security-rich, shared, scalable, and based

computer environment over the Internet for a fee‖.

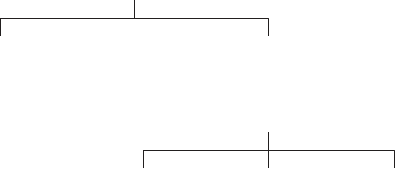

Hardware

Systems Management

FIGURE 1.1. Convergence of various advances

leading to the advent of cloud computing.

Internet Technologies

Distributed Computing

Hardware Virtualization

Utility &

SOA

Cloud

Grid

Web 2.0

Web Services

Autonomic Computing

14

This model brings benefits to both consumers and providers

of IT services. Consumers can attain reduction on IT-related

costs by choosing to obtain cheaper services from external

providers as opposed to heavily investing on IT infrastructure

and personnel hiring. The

―

on-demand

‖

component

of

thi

s

model

allo

ws

con

s

umer

s

to adapt their IT usage to rapidly increasing or unpredictable

computing needs.

Providers of IT services achieve better operational costs;

hardware and software infrastructures are built to provide

multiple solutions and serve many users, thus increasing

efficiency and ultimately leading to faster return on

investment (ROI) as well as lower total cost of ownership

(TCO).

The mainframe era collapsed with the advent of fast and

inexpensive microprocessors and IT data centers moved to

collections of commodity servers. Apart from its clear

advantages, this new model inevitably led to isolation of

workload into dedicated servers, mainly due to

incompatibilities

Between software stacks and operating systems.

These facts reveal the potential of delivering computing

services with the speed and reliability that businesses enjoy

with their local machines. The benefits of economies of

scale and high utilization allow providers to offer

computing services for a fraction of what it costs for a typical

company that generates its own computing power.

SOA, WEB SERVICES, WEB 2.0, AND MASHUPS

The emergence of Web services (WS) open standards has

significantly con-tributed to advances in the domain of

15

software integration. Web services can glue together

applications running on different messaging product plat-

forms, enabling information from one application to be made

available to others, and enabling internal applications to be

made available over the Internet.

Over the years a rich WS software stack has been specified

and standardized, resulting in a multitude of technologies to

describe, compose, and orchestrate services, package and

transport messages between services, publish and dis- cover

services, represent quality of service (QoS) parameters, and

ensure security in service access.

WS standards have been created on top of existing ubiquitous

technologies such as HTTP and XML, thus providing a

common mechanism for delivering services, making them

ideal for implementing a service-oriented architecture

(SOA).

The purpose of a SOA is to address requirements of loosely

coupled, standards-based, and protocol- independent

distributed computing. In a SOA, software

res

ource

s

are

packaged

as

―

services

,‖

whi

ch

are

we

ll- defined, self-

contained modules that provide standard business

functionality and are independent of the state or context of

other services. Services are described in a standard definition

language and have a published interface.

The maturity of WS has enabled the creation of powerful

services that can be accessed on-demand, in a uniform way.

While some WS are published with the intent of serving end-

user applications, their true power resides in its interface

being accessible by other services. An enterprise application

that follows the SOA paradigm is a collection of services that

together perform complex business logic.

In the consumer Web, information and services may be

16

programmatically aggregated, acting as building blocks of

complex compositions, called service mashups. Many

service providers, such as Amazon, del.icio.us, Facebook,

and Google, make their service APIs publicly accessible

using standard protocols such as SOAP and REST.

In the Software as a Service (SaaS) domain, cloud

applications can be built as compositions of other services

from the same or different providers. Services such user

authentication, e-mail, payroll management, and calendars

are examples of building blocks that can be reused and

combined in a business solution in case a single, ready- made

system does not provide all those features. Many building

blocks and solutions are now available in public

marketplaces.

For example, Programmable Web is a public repository of

service APIs and mashups currently listing thousands of APIs

and mashups. Popular APIs such as Google Maps, Flickr,

YouTube, Amazon eCommerce, and Twitter, when

combined, produce a variety of interesting solutions, from

finding video game retailers to weather maps. Similarly,

Salesforce.com‘s offers AppExchange, which enables the

sharing of solutions developed by third-party developers on

top of Salesforce.com components.

GRID COMPUTING

Grid computing enables aggregation of distributed resources

and transparently access to them. Most production grids such

as TeraGrid and EGEE seek to share compute and storage

resources distributed across different administrative

domains, with their main focus being speeding up a broad

range of scientific applications, such as climate modeling,

drug design, and protein analysis.

A key aspect of the grid vision realization has been building

standard Web services-based protocols that allow

17

di

s

tributed

res

ource

s

to

be

―

discovered,

acce

ss

ed,

allocated, monitored, accounted for, and billed for, etc., and

in general managed as a single virtual system.‖ The Open

Grid Services Archi- tecture (OGSA) addresses this need for

standardization by defining a set of core capabilities and

behaviors that address key concerns in grid systems.

UTILITY COMPUTING

In utility computing environments, users assign a

―

utility

‖

value

to

their

job

s

,

wher

e

utility

is

a

fixed or

time-varying valuation that captures various QoS constraints

(deadline, importance, satisfaction). The valuation is the

amount they are willing to pay a service provider to satisfy

their demands. The service providers then attempt to

maximize their own utility, where said utility may directly

correlate with their profit. Providers can choose to prioritize

Hardware Virtualization

Cloud computing services are usually backed by large- scale

data centers composed of thousands of computers. Such data

centers are built to serve many users and host many disparate

applications. For this purpose, hardware virtualization can be

considered as a perfect fit to overcome most operational

issues of data center building and maintenance.

The idea of virtualizing a computer system‘s resources,

including processors, memory, and I/O devices, has been

well established for decades, aiming at improving sharing

and utilization of computer systems.

Hardware virtua-lization allows running multiple operating

systems and software stacks on a single physical platform.

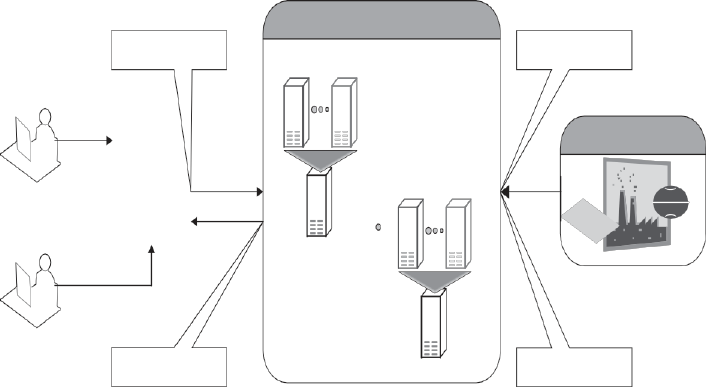

As depicted in Figure 1.2, a software

18

layer, the virtual machine monitor (VMM), also called a

hypervisor, mediates access to the physical hardware

presenting to each guest operating system a virtual machine

(VM), which is a set of virtual platform interfaces .

The advent of several innovative technologies—multi- core

chips, paravir- tualization, hardware-assisted virtualization,

and live migration of VMs—has contributed to an

increasing adoption of virtualization on server systems.

Traditionally, perceived benefits were improvements on

sharing and utilization, better manageability, and higher

reliability.

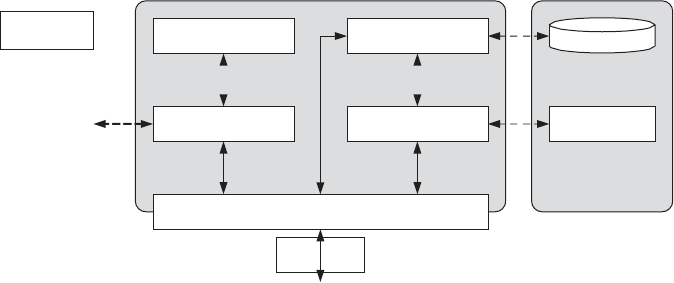

FIGURE 1.2. A hardware virtualized server hosting three

virtual machines, each one running distinct operating system

and user level software stack.

Management of workload in a virtualized system, namely

isolation, consolida-tion, and migration. Workload isolation

is achieved since all program instructions are fully confined

inside a VM, which leads to improvements in security. Better

reliability is also achieved because software failures inside

one VM do not affect others.

Workload migration, also referred to as application

Virtual Machine 1

Virtual Machine 2

Virtual Machine N

User software

User software

User software

App A App X

Data Web

Java

Ruby on

App B

App Y

Linux

Guest OS

Virtual Machine Monitor (Hypervisor)

19

mobility, targets at facilitating hardware maintenance, load

balancing, and disaster recovery. It is done by encapsulating

a guest OS state within a VM and allowing it to be

suspended, fully serialized, migrated to a different platform,

and resumed immediately or preserved to be restored at a

later date. A VM‘s state includes a full disk or partition

image, configuration files, and an image of its RAM.

A number of VMM platforms exist that are the basis of many

utility or cloud computing environments. The most notable

ones, VMWare, Xen, and KVM.

20

Virtual Appliances and the Open Virtualization Format

An application combined with the environment needed to run

it (operating system, libraries, compilers, databases,

application containers, and so forth) is referred to as a

―

virtual

appliance

.‖

P

ackaging

application

environment

s

in

the shape of virtual appliances eases software customization,

configuration, and patching and improves portability. Most

commonly, an appliance is shaped as a VM disk image

associated with hardware requirements, and it can be readily

deployed in a hypervisor.

On-line marketplaces have been set up to allow the exchange

of ready-made appliances containing popular operating

systems and useful software combina- tions, both commercial

and open-source.

Most notably, the VMWare virtual appliance marketplace

allows users to deploy appliances on VMWare hypervi- sors

or on partners public clouds, and Amazon allows developers

to share specialized Amazon Machine Images (AMI) and

monetize their usage on Amazon EC2.

In a multitude of hypervisors, where each one supports a

different VM image format and the formats are incompatible

with one another, a great deal of interoperability issues arises.

For instance, Amazon has its Amazon machine image (AMI)

format, made popular on the Amazon EC2 public cloud.

Other formats are used by Citrix XenServer, several Linux

distributions that ship with KVM, Microsoft Hyper-V, and

VMware ESX.

AUTONOMIC COMPUTING

The increasing complexity of computing systems has

motivated research on autonomic computing, which seeks to

improve systems by decreasing human involvement in their

operation. In other words, systems should manage

21

themselves, with high-level guidance from humans.

Autonomic, or self-managing, systems rely on monitoring

probes and gauges (sensors), on an adaptation engine

(autonomic manager) for computing optimizations based on

monitoring data, and on effectors to carry out changes on the

system. IBM‘s Autonomic Computing Initiative has

contributed to define the four properties of autonomic

systems: self-configuration, self- optimization, self-healing,

and self-protection.

LAYERS AND TYPES OF CLOUDS

Cloud computing services are divided into three classes, according to

the abstraction level of the capability provided and the service model

of providers, namely:

1.

Infrastructure as a Service

2.

Platform as a Service and

3.

Software as a Service.

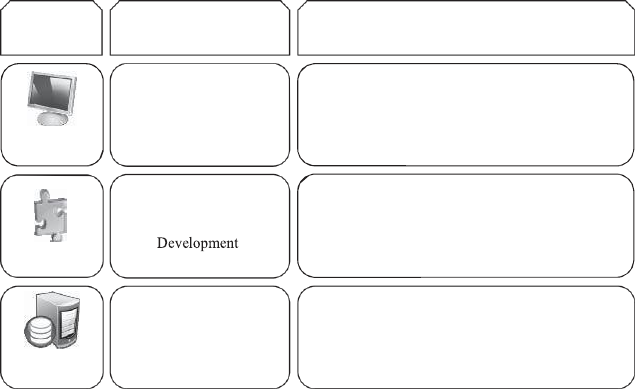

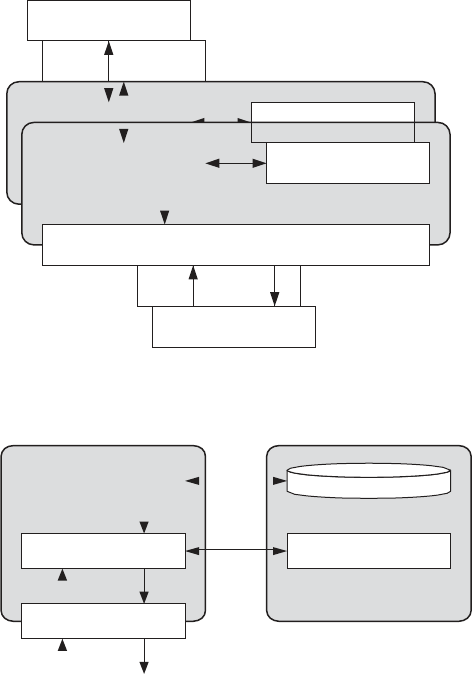

Figure 1.3 depicts the layered organization of the cloud stack from

physical infrastructure to applications.

These abstraction levels can also be viewed as a layered

architecture where services of a higher layer can be composed

from services of the underlying layer. The reference model

explains the role of each layer in an integrated architecture. A

core middleware manages physical resources and the VMs

deployed on top of them; in addition, it provides the required

features (e.g., accounting and billing) to offer multi-tenant pay-

as-you-go services.

Cloud development environments are built on top of

infrastructure services to offer application development and

deployment capabilities; in this level, various programming

models, libraries, APIs, and mashup editors enable the creation

of a range of business, Web, and scientific applications. Once

deployed in the cloud, these applications

22

can be consumed by end users.

Infrastructure as a Service

Offering virtualized resources (computation, storage, and

communication) on demand is known as Infrastructure as a

Service (IaaS).

FIGURE 1.3. The cloud computing stack

.

A cloud infrastructure enables on-demand provisioning of

servers running several choices of operating systems and a

customized software stack. Infrastructure services are

considered to be the bottom layer of cloud computing systems.

Amazon Web Services mainly offers IaaS, which in the case

of its EC2 service means offering VMs with a software stack

that can be customized similar to how an ordinary physical

server would be customized.

Users are given privileges to perform numerous activities to

the server, such as: starting and stopping it, customizing it

Service

Main Access &

Service content

Web Browser

Social networks, Office suites, CRM,

SaaS

Cloud

Cloud Platform

PaaS

Programming languages,

Frameworks,,Mashups editors,

Structured data

Virtual

IaaS

Infrastructure

Compute Servers, Data Storage,

17

23

by installing software packages, attaching virtual disks to it,

and configuring access permissions and firewalls rules.

Platform as a Service

In addition to infrastructure-oriented clouds that provide raw

computing and storage services, another approach is to offer a

higher level of abstraction to make a cloud easily

programmable, known as Platform as a Service (PaaS).

A cloud platform offers an environment on which developers

create and deploy applications and do not necessarily need to

know how many processors or how much memory that

applications will be using. In addition, multiple programming

models and specialized services (e.g., data access,

authentication, and payments) are offered as building blocks

to new applications.

Google App Engine, an example of Platform as a Service,

offers a scalable environment for developing and hosting Web

applications, which should be written in specific programming

languages such as Python or Java, and use the services‘ own

proprietary structured object data store.

Building blocks include an in-memory object cache

(memcache), mail service, instant messaging service (XMPP),

an image manipulation service, and integration with Google

Accounts authentication service.

Software as a Service

Applications reside on the top of the cloud stack. Services

provided by this layer can be accessed by end users through

Web portals. Therefore, consumers are increasingly shifting

from locally installed computer programs to on-line software

services that offer the same functionally.

Traditional desktop applica-tions such as word processing

24

and spreadsheet can now be accessed as a service in the Web.

This model of delivering applications, known as Software as

a Service (SaaS), alleviates the burden of software

maintenance for customers and simplifies development and

testing for providers.

Salesforce.com, which relies on the SaaS model, offers

business productivity applications (CRM) that reside

completely on their servers, allowing costumers to customize

and access applications on demand.

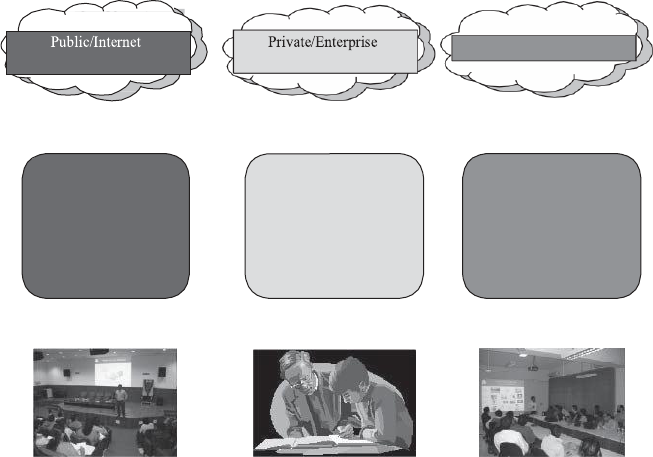

Deployment Models

Although cloud computing has emerged mainly from the appearance

of public computing utilities, other deployment models, with

variations in physical location and distribution, have been adopted. In

this sense, regardless of its service class, a cloud can be classified as

public, private, community, or hybrid based on model of deployment

as shown in Figure 1.4.

Clouds

Hybrid/Mixed Clouds

3rd party,

multi-tenant Cloud

infrastructure

& services:

Cloud computing

model run

within a company‘s

own Data Center/

infrastructure for

internal and/or

Mixed usage of

private and public

Clouds:

Leasing public

cloud services

when private cloud

capacity is

25

FIGURE 1.4. Types of clouds based on deployment models.

P

ublic

cloud

as

a

―

cloud

made

available

in

a

pay-

as-you-go

manner

to

the

general

public

‖

and

private

cloud

as

―

internal

data center of a business or other organization, not made

available to the general public.‖

Establishing a private cloud means restructuring an existing

infrastructure by adding virtualization and cloud-like

interfaces. This allows users to interact with the local data

center while experiencing the same advantages of public

clouds, most notably self-service interface, privileged access

to virtual servers, and per-usage metering and billing.

A

community

cloud

is

―

shared

by

s

everal

organization

s

and

a specific community that has shared concerns (e.g., mission,

security require-ments, policy, and compliance

considerations) .

A hybrid cloud takes shape when a private cloud is

supplemented with computing capacity from public clouds.

The approach of temporarily renting capacity to handle spikes

in load is known as cloud-bursting.

FEATURES OF A CLOUD

i.

Self-service

ii.

Per-usage metered and billed

iii.

Elastic

iv.

Customizable

SELF-SERVICE

Consumers of cloud computing services expect on-demand,

nearly instant access to resources. To support this

26

expectation, clouds must allow self-service access so that

customers can request, customize, pay, and use services

without intervention of human operators.

PER-USAGE METERING AND BILLING

Cloud computing eliminates up-front commitment by users,

allowing them to request and use only the necessary amount.

Services must be priced on a short- term basis (e.g., by the

hour), allowing users to release (and not pay for) resources

as soon as they are not needed. For these reasons, clouds

must implement features to allow efficient trading of service

such as pricing, accounting, and billing.

Metering should be done accordingly for different types of

service (e.g., storage, processing, and bandwidth) and usage

promptly reported, thus providing greater transparency.

ELASTICITY

Cloud computing gives the illusion of infinite computing resources

available on demand. Therefore users expect clouds to rapidly

provide resources in any Quantity at any time.

In particular, it is expected that the additional resources can be

i.

Provisioned, possibly automatically, when an application

load increases and

ii.

Released when load decreases (scale up and down).

CUSTOMIZATION

In a multi-tenant cloud a great disparity between user needs is

often the case. Thus, resources rented from the cloud must be

highly customizable. In the case of infrastructure services,

customization means allowing users to deploy specialized

virtual appliances and to be given privileged (root) access to

the virtual servers.

27

CLOUD INFRASTRUCTURE MANAGEMENT

A key challenge IaaS providers face when building a cloud

infrastructure is managing physical and virtual resources, namely

servers, storage, and net- works.

The software toolkit responsible for this orchestration is called

a virtual infrastructure manager (VIM). This type of software

resembles a traditional operating system but instead of dealing

with a single computer, it aggregates resources from multiple

computers, presenting a uniform view to u

s

er

and

application

s

.

The

term

―

cloud

operating

syste

m‖

is

also used

to refer to it.

The availability of a remote cloud-like interface and the ability

of managing many users and their permissions are the

primary features that would distinguish cloud toolkits from

VIMs.

Virtually all VIMs we investigated present a set of basic features

related to managing the life cycle of VMs, including networking

groups of VMs together and setting up virtual disks for VMs.

FEATURES AVAILABLE IN VIMS

VIRTUALIZATION SUPPORT:

The multi-tenancy aspect of clouds requires multiple

customers with disparate requirements to be served by a single

hardware infrastructure. Virtualized resources (CPUs,

memory, etc.) can be sized and resized with certain flexibility.

These features make hardware virtualization, the ideal

technology to create a virtual infrastructure that partitions a

data center among multiple tenants.

28

SELF-SERVICE, ON-DEMAND RESOURCE,

PROVISIONING:

Self-service access to resources has been perceived as one the

most attractive features of clouds. This feature enables users

to directly obtain services from clouds, such as spawning the

creation of a server and tailoring its software, configurations,

and security policies, without interacting with a human

system administrator.

Thi

s

cap-

ability

―

eliminates

the

need

for more

time-

consuming, labor-intensive, human- driven procurement

processes familiar to many in IT‖. Therefore, exposing a self-

service interface, through which users can easily interact with

the system, is a highly desirable feature of a VI manager.

MULTIPLE BACKEND HYPERVISORS:

Different virtualization models and tools offer different

benefits, drawbacks, and limitations. Thus, some VI managers

provide a uniform management layer regardless of the

virtualization technology used. This characteristic is more

visible in open-source VI managers, which usually provide

pluggable drivers to interact with multiple hypervisors.

STORAGE VIRTUALIZATION:

Virtualizing storage means abstracting logical storage from

physical storage. By consolidating all available storage

devices in a data center, it allows creating virtual disks

independent from device and location. Storage devices are

commonly organized in a storage area network (SAN) and

attached to servers via protocols such as Fibre Channel, iSCSI,

and NFS; a storage controller provides the layer of abstraction

between virtual and physical storage.

In the VI management sphere, storage virtualization support

is often restricted to commercial products of

29

companies such as VMWare and Citrix. Other products

feature ways of pooling and managing storage devices, but

administrators are still aware of each individual device.

Interface to Public Clouds. Researchers have perceived that

extending the capacity of a local in-house computing

infrastructure by borrowing resources from public clouds is

advantageous. In this fashion, institutions can make good use

of their available resources and, in case of spikes in demand,

extra load can be offloaded to rented resources .

A VI manager can be used in a hybrid cloud setup if it offers

a driver to manage the life cycle of virtualized resources

obtained from external cloud providers. To the applications,

the use of leased resources must ideally be transparent.

Virtual Networking. Virtual networks allow creating an

isolated network on top of a physical infrastructure

independently from physical topology and locations . A

virtual LAN (VLAN) allows isolating traffic that shares a

switched network, allowing VMs to be grouped into the same

broadcast domain. Additionally, a VLAN can be configured

to block traffic originated from VMs from other networks.

Similarly, the VPN (virtual private network) concept is used

to describe a secure and private overlay network on top of a

public network (most commonly the public Internet).

DYNAMIC RESOURCE ALLOCATION:

In cloud infrastructures, where applications have variable

and dynamic needs, capacity management and demand

predic-tion are especially complicated. This fact triggers the

need for dynamic resource allocation aiming at obtaining a

timely match of supply and demand.

A number of VI managers include a dynamic resource

allocation feature that continuously monitors utilization

30

across resource pools and reallocates available resources

among VMs according to application needs.

VIRTUAL CLUSTERS:

Several VI managers can holistically manage groups of VMs.

This feature is useful for provisioning computing virtual

clusters on demand, and interconnected VMs for multi-tier

Internet applications.

RESERVATION AND NEGOTIATION MECHANISM:

When users request computational resources to available at

a specific time, requests are termed advance reservations

(AR), in contrast to best-effort requests, when users request

resources whenever available.

HIGH AVAILABILITY AND DATA RECOVERY:

The high availability (HA) feature of VI managers aims at

minimizing application downtime and preventing business

disruption.

INFRASTRUCTURE AS A SERVICE PROVIDERS

Public Infrastructure as a Service providers commonly offer virtual

servers containing one or more CPUs, running several choices of

operating systems and a customized software stack.

FEATURES

The most relevant features are:

i.

Geographic distribution of data centers;

ii.

Variety of user interfaces and APIs to access the system;

iii.

Specialized components and services that aid particular

applications (e.g., load- balancers, firewalls);

iv.

Choice of virtualization platform and operating systems; and

v.

Different billing methods and period (e.g., prepaid vs. post-

paid, hourly vs. monthly).

31

GEOGRAPHIC PRESENCE:

Ava

ilability

zone

s

are

―

distinct

location

s

that

are

engineered to be insulated from failures in other availability

zones and provide inexpensive, low-latency network

connectivity to other availability zones in the same region

.‖

Region

s

,

in

turn,

―

are

geographi-

cally

di

s

per

s

ed and will

be in separate geographic areas or countries.‖

USER INTERFACES AND ACCESS TO SERVERS:

A public IaaS provider must provide multiple access means to

its cloud, thus catering for various users and their preferences.

Different types of user interfaces (UI) provide different levels

of abstraction, the most common being graphical user

interfaces (GUI), command-line tools (CLI), and Web service

(WS) APIs.

GUIs are preferred by end users who need to launch,

customize, and monitor a few virtual servers and do not

necessary need to repeat the process several times.

ADVANCE RESERVATION OF CAPACITY:

Advance reservations allow users to request for an IaaS

provider to reserve resources for a specific time frame in the

future, thus ensuring that cloud resources will be available at

that time.

Amazon Reserved Instances is a form of advance reservation

of capacity, allowing users to pay a fixed amount of money

in advance to guarantee resource availability at anytime

during an agreed period and then paying a discounted hourly

rate when resources are in use.

AUTOMATIC SCALING AND LOAD BALANCING:

It allow users to set conditions for when they want their

applications to scale up and down, based on application-

specific metrics such as transactions per second, number of

simultaneous users, request latency, and so forth.

32

When the number of virtual servers is increased by automatic

scaling, incoming traffic must be automatically distributed

among the available servers. This activity enables applications

to promptly respond to traffic increase while also achieving

greater fault tolerance.

SERVICE-LEVEL AGREEMENT:

Service-level agreements (SLAs) are offered by IaaS

providers to express their commitment to delivery of a certain

QoS. To customers it serves as a warranty. An SLA usually

include availability and performance guarantees.

HYPERVISOR AND OPERATING SYSTEM CHOICE:

IaaS offerings have been based on heavily customized open-

source Xen deployments. IaaS providers needed expertise in

Linux, networking, virtualization, metering, resource

management, and many other low-level aspects to

successfully deploy and maintain their cloud offerings.

PLATFORM AS A SERVICE PROVIDERS

Public Platform as a Service providers commonly offer a

development and deployment environment that allow users to

create and run their applications with little or no concern to

low-level details of the platform.

FEATURES

Programming Models, Languages, and Frameworks.

Programming mod-els made available by IaaS providers

define how users can express their applications using higher

levels of abstraction and efficiently run them on the cloud

platform.

33

Persistence Options. A persistence layer is essential to allow

applications to record their state and recover it in case of

crashes, as well as to store user data.

SECURITY, PRIVACY, AND TRUST

Security and privacy affect the entire cloud computing stack,

since there is a massive use of third-party services and

infrastructures that are used to host important data or to

perform critical operations. In this scenario, the trust toward

providers is fundamental to ensure the desired level of

privacy for applications hosted in the cloud.

When data are moved into the Cloud, providers may choose to

locate them anywhere on the planet. The physical location of

data centers determines the set of laws that can be applied to

the management of data.

DATA LOCK-IN AND STANDARDIZATION

The Cloud Computing Interoperability Forum (CCIF) was

formed by organizations such as Intel, Sun, and Cisco in

order

to

―

enable

a

global

cloud

computing

eco

s

y

s

tem

whereby organizations are able to seamlessly work together

for the purposes for wider industry adoption of cloud

computing technology.‖ The development of the Unified

Cloud Interface (UCI) by CCIF aims at creating a standard

programmatic point of access to an entire cloud

infrastructure

.

In the hardware virtualization sphere, the Open Virtual

Format (OVF) aims at facilitating packing and distribution

of software to be run on VMs so that virtual appliances can

be made portable

34

AVAILABILITY, FAULT-TOLERANCE, AND DISASTER

RECOVERY

It is expected that users will have certain expectations about

the service level to be provided once their applications are

moved to the cloud. These expectations include availability

of the service, its overall performance, and what measures

are to be taken when something goes wrong in the system

or its components. In summary, users seek for a warranty

before they can comfortably move their business to the

cloud.

SLAs, which include QoS requirements, must be ideally set

up between customers and cloud computing providers to act

as warranty. An SLA specifies the details of the service to

be provided, including availability and performance

guarantees. Additionally, metrics must be agreed upon by

all parties, and penalties for violating the expectations must

also be approved.

RESOURCE MANAGEMENT AND ENERGY-EFFICIENCY

The multi-dimensional nature of virtual machines complicates

the activity of finding a good mapping of VMs onto available

physical hosts while maximizing user utility.

Dimensions to be considered include: number of CPUs,

amount of memory, size of virtual disks, and network

bandwidth. Dynamic VM mapping policies may leverage the

ability to suspend, migrate, and resume VMs as an easy way

of preempting low-priority allocations in favor of higher-

priority ones.

Migration of VMs also brings additional challenges such as

detecting when to initiate a migration, which VM to migrate,

and where to migrate. In addition, policies may take

advantage of live migration of virtual machines to relocate

data center load without significantly disrupting running

services.

35

MIGRATING INTO A CLOUD

The promise of cloud computing has raised the IT

expectations of small and medium enterprises beyond

measure. Large companies are deeply debating it. Cloud

computing is a disruptive model of IT whose innovation is

part technology and part business model in short a

―

disruptive

techno-commercial

model

‖

of IT.

We

propo

s

e

the

followi

ng

definition

of

cloud

computing:

―

It

is a techno-business disruptive model of using distributed large-

scale data centers either private or public or hybrid offering

customers a scalable virtualized infrastructure or an abstracted set

of services qualified by service-level agreements (SLAs) and

charged only by the abstracted IT resources consumed.‖

FIGURE 2.1. The promise of the cloud computing services.

In Figure 2.1, the promise of the cloud both on the business

front (the attractive cloudonomics) and the technology front

widely aided the CxOs to spawn out several non-mission

critical IT needs from the ambit of their captive traditional

data centers to the appropriate cloud service.

Several small and medium business enterprises, however,

leveraged the cloud much beyond the cautious user. Many

startups opened their IT departments exclusively using cloud

services very successfully and with high ROI. Having

observed these successes, several large enterprises have

started successfully running pilots for leveraging the cloud.

Cloudonomics

‗Pay per use‘ – Lower Cost Barriers

On Demand Resources –Autoscaling

Capex vs OPEX – No capital expenses (CAPEX) and only operational expenses OPEX.

SLA driven operations – Much Lower TCO

Attractive NFR support: Availability, Reliability

Technology

‗Infinite‘ Elastic availability – Compute/Storage/Bandwidth

Automatic Usage Monitoring and Metering

Jobs/ Tasks Virtualized and Transparently ‗Movable‘

Integration and interoperability ‗support‘ for hybrid ops

Transparently encapsulated & abstracted IT features.

36

Many large enterprises run SAP to manage their operations.

SAP itself is experimenting with running its suite of products:

SAP Business One as well as SAP Netweaver on Amazon

cloud offerings.

THE CLOUD SERVICE OFFERINGS AND DEPLOYMENT

MODELS

Cloud computing has been an attractive proposition both for

the CFO and the CTO of an enterprise primarily due its ease

of usage. This has been achieved by large data center service

vendors or now better known as cloud service vendors again

primarily due to their scale of operations.

FIGURE 2.2. The cloud computing service offering and

deployment models.

IaaS

Abstract Compute/Storage/Bandwidth Resources

Amazon Web Services[10,9] – EC2, S3, SDB, CDN, CloudWatch

IT Folks

PaaS

Abstracted Programming Platform with encapsulated infrastructure

Programmers

• Google Apps Engine(Java/Python), Microsoft Azure, Aneka[13]

SaaS

Application with encapsulated infrastructure & platform

Cloud Application Deployment & Consumption Models

Public Clouds

Hybrid Clouds

Private Clouds

37

FIGURE 2.3. ‘Under the hood’ challenges of the cloud computing

services implementations.

BROAD APPROACHES TO MIGRATING INTO THE CLOUD

Cloud Economics deals with the economic rationale for

leveraging the cloud and is central to the success of cloud-

based enterprise usage. Decision-makers, IT managers, and

software architects are faced with several dilemmas when

planning for new Enterprise IT initiatives.

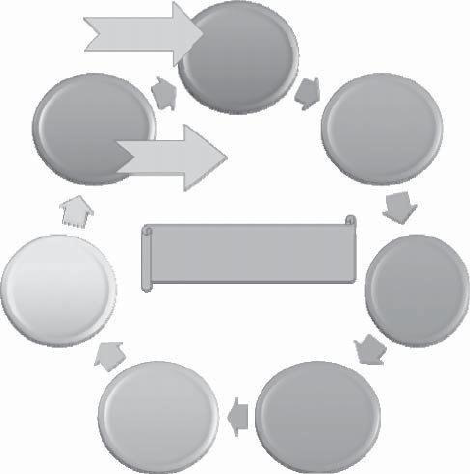

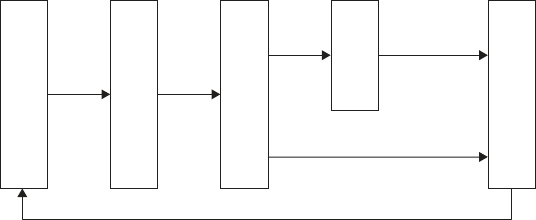

THE SEVEN-STEP MODEL OF MIGRATION INTO A CLOUD

Typically migration initiatives into the cloud are implemented

in phases or in stages. A structured and process-oriented

approach to migration into a cloud has several advantages of

capturing within itself the best practices of many migration

projects.

1.

Conduct Cloud Migration Assessments

2.

Isolate the Dependencies

Distributed System Fallacies

Challenges in Cloud Technologies

and the Promise of the Cloud

Full Network Reliability

Security

Zero Network Latency

Infinite Bandwidth

Secure Network

Performance Monitoring

Consistent & Robust Service abstractions

Meta Scheduling

Energy efficient load balancing

No Topology changes

Centralized Administration

Scale management

SLA & QoS Architectures

Zero Transport Costs

Interoperability & Portability

Homogeneous Networks & Systems

Green IT

38

3.

Map the Messaging & Environment

4.

Re-architect & Implement the lost Functionalities

5.

Leverage Cloud Functionalities & Features

6.

Test the Migration

7.

Iterate and Optimize

The Seven-Step Model of Migration into the Cloud. (Source:

Infosys Research.)

FIGURE 2.5. The iterative Seven-step Model of Migration

into the Cloud. (Source: Infosys Research.)

START

Assess

Optimize

Isolate

END

The Iterative Seven Step

Test

Map

Augment

Re-

39

Migration Risks and Mitigation

The biggest challenge to any cloud migration project is how

effectively the migration risks are identified and mitigated. In

the Seven-Step Model of Migration into the Cloud, the

process step of testing and validating includes

efforts to identify the key migration risks. In the optimization

step, we address various approaches to mitigate the identified

migration risks.

Migration risks for migrating into the cloud fall under two

broad categories: the general migration risks and the security-

related migration risks. In the former we address several issues

including performance monitoring and tuning—essentially

identifying all possible production level deviants; the business

continuity and disaster recovery in the world of cloud

computing service; the compliance with standards and

governance issues; the IP and licensing issues; the quality

of service (QoS) parameters as well as the corresponding

SLAs committed to; the ownership, transfer, and storage of

data in the application; the portability and interoperability

issues which could help mitigate potential vendor lock-ins; the

issues that result in trivializing and non comprehencing the

complexities of migration that results in migration failure and

loss of senior management‘s business confidence in these

efforts.

ENRICHING THE ‘INTEGRATION AS A SERVICE’

PARADIGM FOR THE CLOUD ERA

THE EVOLUTION OF SaaS

SaaS paradigm is on fast track due to its innate powers

and potentials. Executives, entrepreneurs, and end-users

are ecstatic about the tactic as well as strategic success

of the emerging and evolving SaaS paradigm. A number

of positive and progressive developments started to grip

this model. Newer

40

resources and activities are being consistently readied

to be delivered as a IT as a Service (ITaaS) is the most

recent and efficient delivery method in the decisive IT

landscape. With the meteoric and mesmerizing rise of

the service orientation principles, every single IT

resource, activity and infrastructure is being viewed

and visualized as a service that sets the tone for the

grand unfolding of the dreamt service era. This is

accentuated due to the pervasive Internet.

Integration as a service (IaaS) is the budding and

distinctive capability of clouds in fulfilling the business

integration requirements. Increasingly business

applications are deployed in clouds to reap the business

and technical benefits. On the other hand, there are still

innumerable applications and data sources locally

stationed and sustained primarily due to the security

reason. The question here is how to create a seamless

connectivity between those hosted and on-premise

applications to empower them to work together.

IaaS over- comes these challenges by smartly utilizing

the time-tested business-to-business (B2B) integration

technology as the value-added bridge between SaaS

solutions and in-house business applications.

1. The Web is the largest digital information superhighway

2.

The Web is the largest repository of all kinds of resources

such as web pages, applications comprising enterprise

components, business services, beans, POJOs, blogs,

corporate data, etc.

3.

The Web is turning out to be the open, cost-effective and

generic business execution platform (E-commerce, business,

auction, etc. happen in the web for global users) comprising a

wider variety of containers, adaptors, drivers, connectors, etc.

41

4.

The Web is the global-scale communication infrastructure

(VoIP, Video conferencing, IP TV etc,)

5.

The Web is the next-generation discovery, Connectivity, and

integration middleware

Thus the unprecedented absorption and adoption of the Internet is

the key driver for the continued success of the cloud computing.

THE CHALLENGES OF SaaS PARADIGM

As with any new technology, SaaS and cloud concepts too suffer a

number of limitations. These technologies are being diligently

examined for specific situations and scenarios. The prickling and

tricky issues in different layers and levels are being looked into. The

overall views are listed out below. Loss or lack of the following

features deters the massive adoption of clouds

1.

Controllability

2. Visibility & flexibility

3.

Security and Privacy

4.

High Performance and Availability

5.

Integration and Composition

6.

Standards

A number of approaches are being investigated for

resolving the identified issues and flaws. Private cloud, hybrid

and the latest community cloud are being prescribed as the

solution for most of these inefficiencies and deficiencies. As

rightly pointed out by someone in his weblogs, still there are

miles to go. There are several companies focusing on this

issue.

42

Integration Conundrum

. While SaaS applications offer

outstanding value in terms of features and functionalities relative

to cost, they have introduced several challenges specific to

integration. The first issue is that the majority of SaaS applications

are point solutions and service one line of business.

APIs are Insufficient:

Many SaaS providers have responded to the

integration challenge by developing application programming

interfaces (APIs). Unfortunately, accessing and managing data via

an API requires a significant amount of coding as well as

maintenance due to frequent API modifications and updates.

Data Transmission Security:

SaaS providers go to great length to

ensure that customer data is secure within the hosted environment.

However, the need to transfer data from on-premise systems or

applications behind the firewall with SaaS applications hosted

outside of the client‘s data center poses new challenges that need to

be addressed by the integration solution of choice.

The Impacts of Cloud:.

On the infrastructural front, in the recent

past, the clouds have arrived onto the scene powerfully and have

extended the horizon and the boundary of business applications,

events and data. That is, business applications, development

platforms etc. are getting moved to elastic, online and on-demand

cloud infrastructures. Precisely speaking, increasingly for business,

technical, financial and green reasons, applications and services are

being readied and relocated to highly scalable and available

clouds.

THE INTEGRATION METHODOLOGIES

Excluding the custom integration through hand-coding, there are

three types for cloud integration

1.

Traditional Enterprise Integration Tools can be empowered

with special connectors to access Cloud-located

Applications—This is the most likely approach for IT

43

organizations, which have already invested a lot in

integration suite for their application integration needs.

2.

Traditional Enterprise Integration Tools are hosted in the

Cloud—This approach is similar to the first option except that

the integration software suite is now hosted in any third-party

cloud infrastructures so that the enterprise does not worry

about procuring and managing the hardware or installing the

integration software. This is a good fit for IT organizations that

outsource the integration projects to IT service

organizations and systems integrators, who have the skills

and resources to create and deliver integrated systems.

Integration-as-a-Service (IaaS) or On-Demand Integration

Offerings— These are SaaS applications that are designed to deliver

the integration service securely over the Internet and are able to

integrate cloud applications with the on-premise systems, cloud-to-

cloud applications.

SaaS administrator or business analyst as the primary resource for

managing and maintaining their integration work. A good example

is Informatica On-Demand Integration Services.

In the integration requirements can be realised using any one of the

following methods and middleware products.

1.

Hosted and extended ESB (Internet service bus / cloud

integration bus)

2.

Online Message Queues, Brokers and Hubs

3.

Wizard and configuration-based integration platforms (Niche

integration solutions)

4.

Integration Service Portfolio Approach

5.

Appliance-based Integration (Standalone or Hosted)

44

CHARACTERISTICS OF INTEGRATION SOLUTIONS

AND PRODUCTS.

The key attri-butes of integration platforms and backbones gleaned

and gained from integration projects experience are connectivity,

semantic mediation, Data mediation, integrity, security, governance

etc

●

Connectivity refers to the ability of the integration engine to

engage with both the source and target systems using

available native interfaces. This means leveraging the

interface that each provides, which could vary from

standards-based interfaces, such as Web services, to older

and proprietary interfaces. Systems that are getting

connected are very much responsible for the externalization

of the correct information and the internalization of

information once processed by the integration engine.

●

Semantic Mediation refers to the ability to account for the

differences between application semantics between two or

more systems. Semantics means how information gets

understood, interpreted and represented within information

systems. When two different and distributed systems are

linked, the differences between their own yet distinct

semantics have to be covered.

●

Data Mediation converts data from a source data format

into destination data format. Coupled with semantic

mediation, data mediation or data transformation is the

process of converting data from one native format on the

source system, to another data format for the target

system.

●

Data Migration is the process of transferring data between

storage types, formats, or systems. Data migration means

that the data in the old system is mapped to the new systems,

typically leveraging data extraction and data loading

technologies.

45

●

Data Security means the ability to insure that information

extracted from the source systems has to securely be placed

into target systems. The integration method must leverage the

native security systems of the source and target systems,

mediate the differences, and provide the ability to transport

the information safely between the connected systems.

●

Data Integrity means data is complete and consistent. Thus,

integrity has to be guaranteed when data is getting mapped

and maintained during integration operations, such as data

synchronization between on-premise and SaaS-based

systems.

●

Governance refers to the processes and technologies that

surround a system or systems, which control how those

systems are accessed and leveraged. Within the integration

perspective, governance is about mana- ging changes to core

information resources, including data semantics, structure,

and interfaces.

THE ENTERPRISE CLOUD COMPUTING PARADIGM:

Relevant Deployment Models for Enterprise Cloud Computing

There are some general cloud deployment models that are accepted by

the majority of cloud stakeholders today:

1)

Public clouds are provided by a designated service provider for general

public under a utility based pay-per-use consumption model. The cloud

resources are hosted generally on the service provider‘s premises.

Popular examples of public clouds are Amazon‘s AWS (EC2, S3 etc.),

Rackspace Cloud Suite, and Microsoft‘s Azure Service Platform.

2)

Private clouds are built, operated, and managed by an organization for

its internal use only to support its business operations exclusively.

Public,private, and government organizations worldwide are adopting

46

this model to exploit the cloud benefits like flexibility, cost reduction,

agility and so on.

3)

Virtual private clouds are a derivative of the private cloud deployment

model but are further characterized by an isolated and secure segment

of resources, created as an overlay on top of public cloud infrastructure

using advanced network virtualization capabilities. Some of the public

cloud vendors that offer this capability include Amazon Virtual Private

Cloud , OpSource Cloud , and Skytap Virtual Lab.

4)

Community clouds are shared by several organizations and support a

specific community that has shared concerns (e.g., mission, security

requirements, policy, and compliance considerations). They may be

managed by the organizations or a third party and may exist on premise

or off premise. One example of this is OpenCirrus formed by HP,Intel,

Yahoo, and others.

5)

Managed clouds arise when the physical infrastructure is owned by

and/or physically located in the organization‘s data centers with an

extension of management and security control plane controlled by the

managed service provider. This deployment model isn‘t widely agreed

upon, however, some vendors like ENKI and NaviSite‘s NaviCloud

offers claim to be managed cloud offerings.

6)

Hybrid clouds are a composition of two or more clouds (private,

community,or public) that remain unique entities but are bound

together by standardized or proprietary technology that enables data

and application.

47

UNIT-3

VIRTUAL MACHINES PROVISIONING AND

MIGRATION SERVICES

ANALOGY FOR VIRTUAL MACHINE PROVISIONING:

•

Historically, when there is a need to install a new server for a

certain workload to provide a particular service for a client, lots

of effort was exerted by the IT administrator, and much time was

spent to install and provision a new server. 1) Check the

inventory for a new machine, 2) get one, 3) format, install OS

required, 4) and install services; a server is needed along with

lots of security batches and appliances.

•

Now, with the emergence of virtualization technology and the

cloud computing IaaS model:

•

It is just a matter of minutes to achieve the same task. All you

need is to provision a virtual server through a self-service

interface with small steps to get what you desire with the

required specifications. 1) provisioning this machine in a public

cloud like Amazon Elastic Compute Cloud (EC2), or 2) using a

virtualization management software package or a private cloud

management solution installed at your data center in order to

provision the virtual machine inside the organization and within

the private cloud setup.

Analogy for Migration Services:

•

Previously, whenever there was a need for performing a

server‘s upgrade or performing maintenance tasks, you would

exert a lot of time and effort, because it is an expensive

operation to maintain or upgrade a main server that has lots of

applications and users.

•

Now, with the advance of the revolutionized virtualization

technology and migration services associated with

48

hypervisors‘ capabilities, these tasks (maintenance, upgrades,

patches, etc.) are very easy and need no time to accomplish.

•

Provisioning a new virtual machine is a matter of minutes,

saving lots of time and effort, Migrations of a virtual machine is

a matter of millisecondsVirtual Machine Provisioning and

Manageability

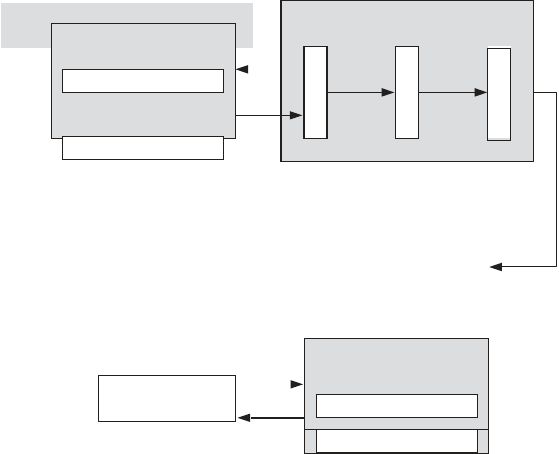

VIRTUAL MACHINE LIFE CYCLE

The cycle starts by a request delivered to the IT department,

stating the requirement for creating a new server for a particular

service.

This request is being processed by the IT administration to start

seeing the servers‘ resource pool, matching these resources with

requirements

Starting the provision of the needed virtual machine.

Once it provisioned and started, it is ready to provide the required

service according to an SLA.

Virtual is being released; and free resources.

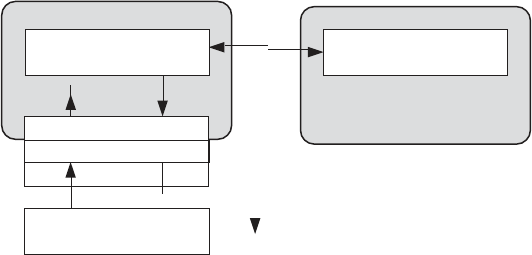

FIG.3.1 VMS LIFE CYCLE

49

VM PROVISIONING PROCESS

•

The common and normal steps of provisioning a virtual server are

as follows:

•

Firstly, you need to select a server from a pool of available servers

(physical servers with enough capacity) along with the appropriate

OS template you need to provision the virtual machine.

•

Secondly, you need to load the appropriate software (operating

System you selected in the previous step, device drivers,

middleware, and the needed applications for the service required).

•

Thirdly, you need to customize and configure the machine (e.g., IP

address, Gateway) to configure an associated network and storage

resources.

•

Finally, the virtual server is ready to start with its newly loaded

software.

To summarize, server provisioning is defining server‘s

configuration based on the organization requirements, a hardware,

and software component (processor, RAM, storage, networking,

operating system, applications, etc.).

Normally, virtual machines can be provisioned by manually

installing an operating system, by using a preconfigured VM

template, by cloning an existing VM, or by importing a physical

server or a virtual server from another hosting platform. Physical

servers can also be virtualized and provisioned using P2V

(Physical to Virtual) tools and techniques (e.g., virt- p2v).

After creating a virtual machine by virtualizing a physical server,

or by building a new virtual server in the virtual environment, a

template can be created out of it.

Most virtualization management vendors (VMware, XenServer,

etc.) provide the data center‘s administration with the ability to do

such tasks in an easy way.

50

LIVE MIGRATION AND HIGH AVAILABILITY

Live migration (which is also called hot or real-time

migration) can be defined as the movement of a virtual machine

from one physical host to another while being powered on.

FIG.3.2 VIRTUAL MACHINE PROVISIONING PROCESS

ON THE MANAGEMENT OF VIRTUAL MACHINES

FOR CLOUD INFRASTRUCTURES

THE ANATOMY OF CLOUD INFRASTRUCTURES

Here we focuses on the subject of IaaS clouds and, more specifically, on

the efficient management of virtual machines in this type of cloud. There

are many commercial IaaS cloud providers in the market, such as those

cited earlier, and all of them share five characteristics:

(i)

They provide on-demand provisioning of computational resources;

(ii)

They use virtualization technologies to lease these resources;

(iii)

They provide public and simple remote interfaces to manage those

resources;

(iv)

They use a pay-as-you-go cost model, typically charging by the

hour;

51

(v)

They operate data centers large enough to provide a seemingly

unlimited amount of re

s

ource

s

to their client

s

(

us

ually touted as

―

infinite

capacity

‖

or

―

unlimited elasticity

‖

). Private and hybrid clouds share

these same characteristics, but instead of selling capacity over publicly

accessible interfaces, focus on providing capacity to an organization‘s

internal users.

DISTRIBUTED MANAGEMENT OF VIRTUAL

INFRASTRUCTURES

VM Model and Life Cycle in OpenNebula The life cycle of a VM within

OpenNebula follows several stages:

Resource Selection. Once a VM is requested to OpenNebula, a

feasible placement plan for the VM must be made. OpenNebula‘s

default scheduler provides an implementation of a rank

scheduling policy, allowing site administrators to configure the

scheduler to prioritize the resources that are more suitable for the

VM, using information from the VMs and the physical hosts. In

addition, OpenNebula can also use the Haizea lease manager to

support more complex scheduling policies.

Resource Preparation. The disk images of the VM are transferred

to the target physical resource. During the boot process, the VM

is contextualized, a process where the disk images are specialized

to work in a given environment. For example, if the VM is part of

a group of VMs offering a service (a compute cluster, a DB-based

application, etc.), contextualization could involve setting up the

network and the machine hostname, or registering the new VM

with a service (e.g., the head node in a compute cluster). Different

techniques are available to contextualize a worker node, including

use of an automatic installation system (for instance, Puppet or

Quattor), a context server, or access to a disk image with the

context data for the worker node (OVF recommendation).

VM Termination. When the VM is going to shut down,

OpenNebula can transfer back its disk images to a known

location. This way, changes in the VM can be kept for a future

use.

52

ENHANCING CLOUD COMPUTING

ENVIRONMENTS USING A CLUSTER AS A

SERVICE

RVWS DESIGN

Dynamic Attribute Exposure

There are two categories of dynamic attributes addressed in the

RVWS framework: state and characteristic. State attributes

cover the current activity of the service and its resources, thus

indicating readiness. For example, a Web service that exposes

a cluster (itself a complex resource) would most likely have a

dynamic state attribute that indicates how many nodes in the

cluster are busy and how many are idle.