CLEP TIG - Principles of Marketing • XPP • 79995-007740 • Dr01 8/12/09 ta • Dr02 9/3/09 ta • Preight 9/18/09 • New Job 90336-007740 Dr01 9/19/11 ta • Preight 10/11/11 ta • New Job 96636-

007740 Dr01 11/13/12 ta • Prelight 11/26/12 ta • NEW 100395 dr01 080613 iy • dr02 090413 iy • prf 091313 iy • NEW 105042-007740 Dr01 8/13/14 ta • Dr02 8/9/14 ta • preight 091214 ljg • New job

109579-007740 • Dr01 8/25/15 ta


Test Information Guide:

College-Level Examination Program® 2015-16

Principles of Marketing

© 2015 The College Board. All rights reserved. College Board, College-Level Examination

Program, CLEP, and the acorn logo are registered trademarks of the College Board.


CLEP TEST INFORMATION GUIDE FOR PRINCIPLES OF MARKETING

History of CLEP

Since 1967, the College-Level Examination Program (CLEP®) has provided over six million people with the opportunity to reach their educational goals. CLEP participants have received college credit for knowledge and expertise they have gained through prior course work, independent study or work and life experience.

Over the years, the CLEP examinations have evolved to keep pace with changing curricula and pedagogy. Typically, the examinations represent material taught in introductory college-level courses from all areas of the college curriculum. Students may choose from 33 different subject areas in which to demonstrate their mastery of college-level material.

Today, more than 2,900 colleges and universities

recognize and grant credit for CLEP.

Philosophy of CLEP

Promoting access to higher education is CLEP’s

foundation. CLEP offers students an opportunity to

demonstrate and receive validation of their

college-level skills and knowledge. Students who

achieve an appropriate score on a CLEP exam can

enrich their college experience with higher-level

courses in their major field of study, expand their

horizons by taking a wider array of electives and

avoid repetition of material that they already know.

CLEP Participants

CLEP’s test-taking population includes people of all

ages and walks of life. Traditional 18- to 22-year-old

students, adults just entering or returning to school,

high-school students, home-schoolers and

international students who need to quantify their

knowledge have all been assisted by CLEP in

earning their college degrees. Currently, 59 percent

of CLEP’s National (civilian) test-takers are women

and 46 percent are 23 years of age or older.

For over 30 years, the College Board has worked to

provide government-funded credit-by-exam

opportunities to the military through CLEP. Military

service members are fully funded for their CLEP exam

fees. Exams are administered at military installations

worldwide through computer-based testing programs.

Approximately one-third of all CLEP candidates are

military service members.

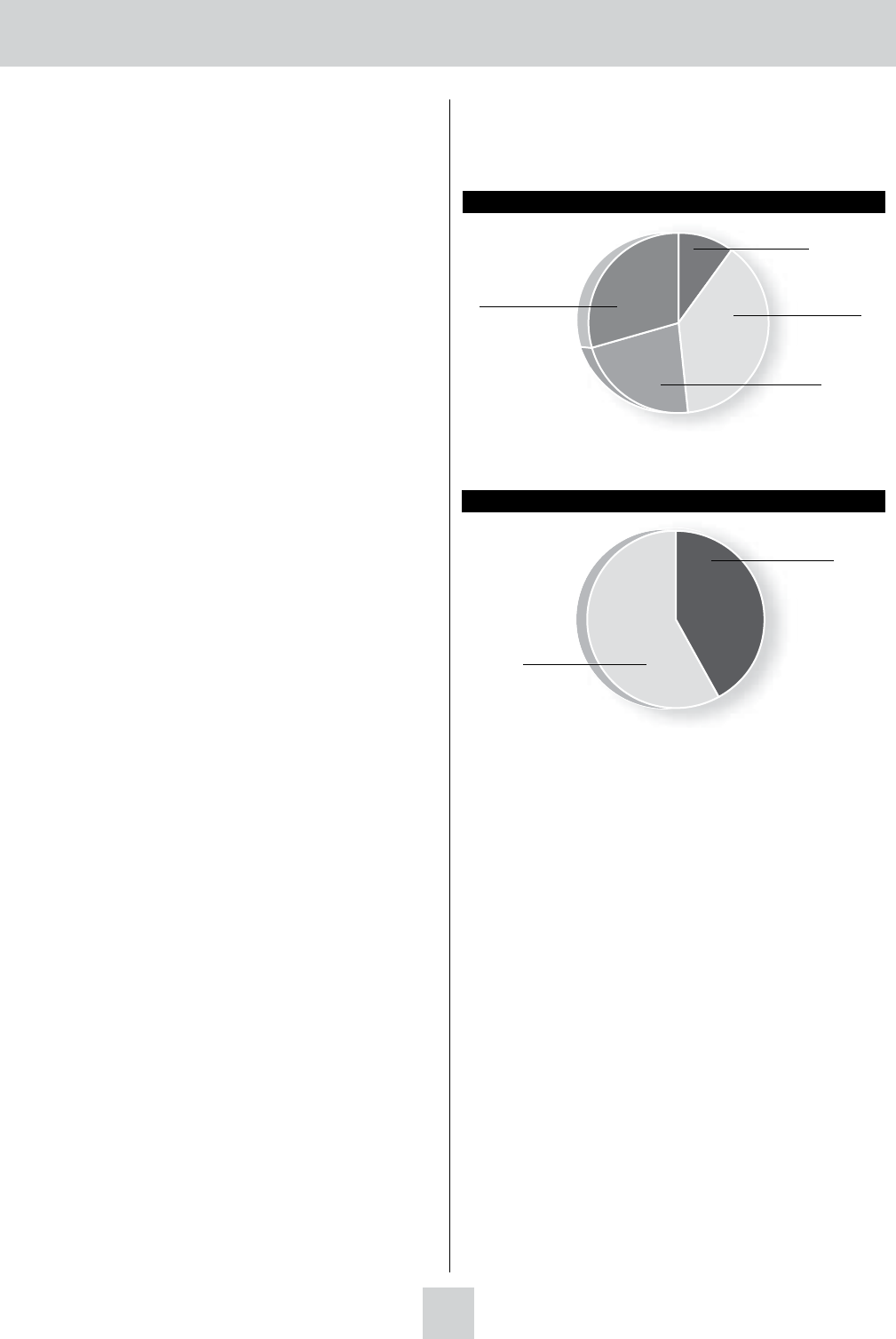

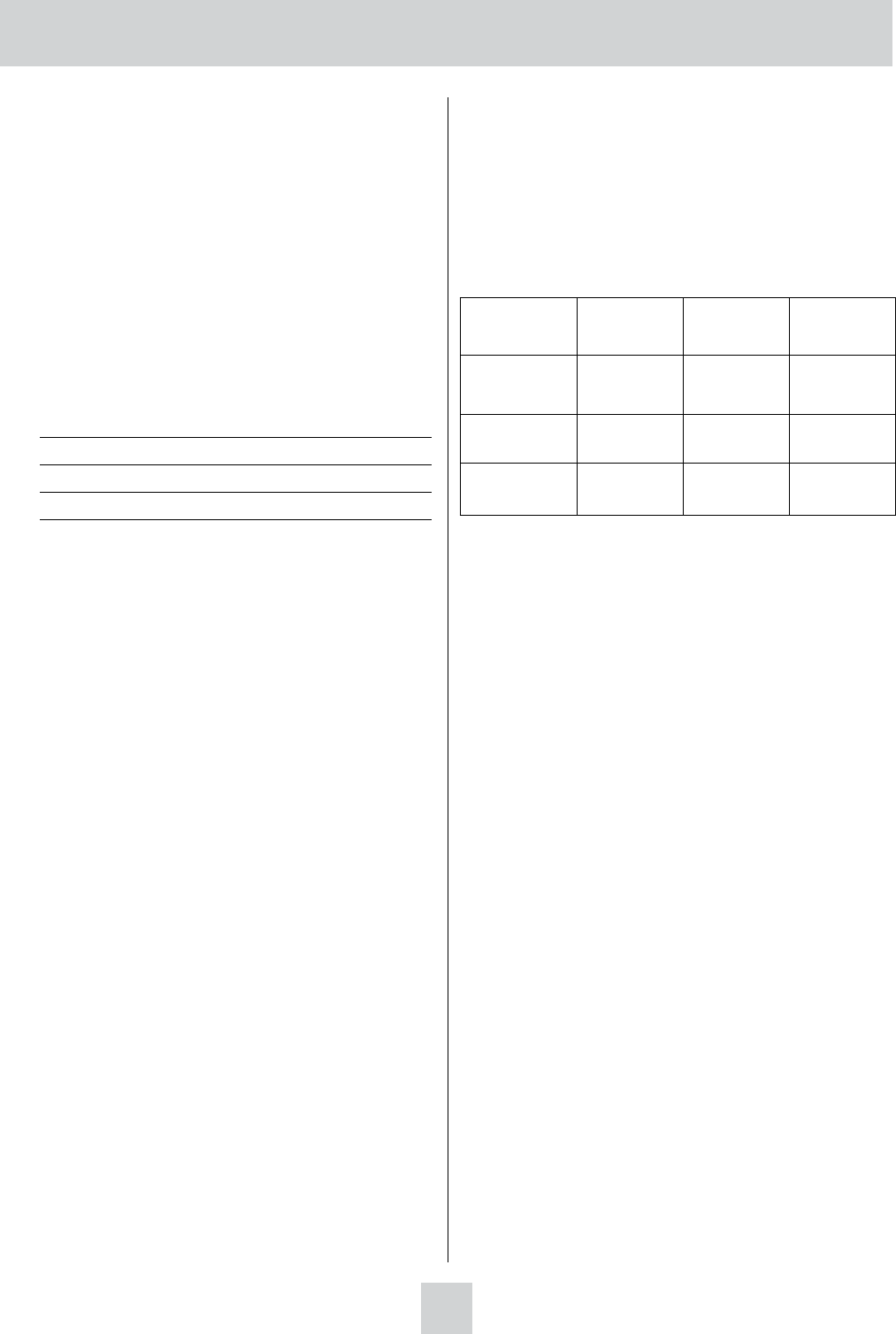

2014-15 National CLEP Candidates by Age*

Under 18: 11%

18-22 years: 43%

23-29 years: 22%

30 years and older: 24%

*These data are based on 100% of CLEP test-takers who responded to this survey question during their examinations.

2014-15 National CLEP Candidates by Gender

Women: 59%

Men: 41%

Computer-Based CLEP Testing

The computer-based format of CLEP exams allows

for a number of key features. These include:

• a variety of question formats that ensure effective assessment

• real-time score reporting that gives students and colleges the ability to make immediate credit-granting decisions (except College Composition, which requires faculty scoring of essays twice a month)

• a uniform recommended credit-granting score of 50 for all exams

• “rights-only” scoring, which awards one point per correct answer

• pretest questions that are not scored but provide current candidate population data and allow for rapid expansion of question pools


CLEP Exam Development

Content development for each of the CLEP exams

is directed by a test development committee. Each

committee is composed of faculty from a wide

variety of institutions who are currently teaching

the relevant college undergraduate courses. The

committee members establish the test specifications

based on feedback from a national curriculum

survey; recommend credit-granting scores and

standards; develop and select test questions; review

statistical data; and prepare descriptive material for

use by faculty (Test Information Guides) and students

planning to take the tests (CLEP Official Study Guide).

College faculty also participate in CLEP in other

ways: they convene periodically as part of

standard-setting panels to determine the

recommended level of student competency for the

granting of college credit; they are called upon to

write exam questions and to review exam forms; and

they help to ensure the continuing relevance of the

CLEP examinations through the curriculum surveys.

The Curriculum Survey

The first step in the construction of a CLEP exam is

a curriculum survey. Its main purpose is to obtain

information needed to develop test-content

specifications that reflect the current college

curriculum and to recognize anticipated changes in

the field. The surveys of college faculty are

conducted in each subject every few years depending

on the discipline. Specifically, the survey gathers

information on:

• the major content and skill areas covered in the equivalent course and the proportion of the course devoted to each area

• specific topics taught and the emphasis given to each topic

• specific skills students are expected to acquire and the relative emphasis given to them

• recent and anticipated changes in course content, skills and topics

• the primary textbooks and supplementary learning resources used

• titles and lengths of college courses that correspond to the CLEP exam

The Committee

The College Board appoints standing committees of

college faculty for each test title in the CLEP battery.

Committee members usually serve a term of up to

four years. Each committee works with content

specialists at Educational Testing Service to establish

test specifications and develop the tests. Listed

below are the current committee members and their

institutional affiliations.

Fred L. Miller (Chair), Murray State University

Janice M. Karlen, City University of New York, La Guardia

DeAnna Kemp, Middle Tennessee State University

The primary objective of the committee is to produce

tests with good content validity. CLEP tests must be

rigorous and relevant to the discipline and the

appropriate courses. While the consensus of the

committee members is that this test has high content

validity for a typical introductory Principles of

Marketing course or curriculum, the validity of the

content for a specific course or curriculum is best

determined locally through careful review and

comparison of test content with instructional content

covered in a particular course or curriculum.

The Committee Meeting

The exam is developed from a pool of questions

written by committee members and outside question

writers. All questions that will be scored on a CLEP

exam have been pretested; those that pass a rigorous

statistical analysis for content relevance, difficulty,

fairness and correlation with assessment criteria are

added to the pool. These questions are compiled by

test development specialists according to the test

specifications, and are presented to all the committee

members for a final review. Before convening at a

two- or three-day committee meeting, the members

have a chance to review the test specifications and

the pool of questions available for possible inclusion

in the exam.


At the meeting, the committee determines whether

the questions are appropriate for the test and, if not,

whether they need to be reworked and pretested

again to ensure that they are accurate and

unambiguous. Finally, draft forms of the exam are

reviewed to ensure comparable levels of difficulty and

content specifications on the various test forms. The

committee is also responsible for writing and

developing pretest questions. These questions are

administered to candidates who take the examination

and provide valuable statistical feedback on student

performance under operational conditions.

Once the questions are developed and pretested,

tests are assembled in one of two ways. In some

cases, test forms are assembled in their entirety.

These forms are of comparable difficulty and are

therefore interchangeable. More commonly,

questions are assembled into smaller,

content-specific units called testlets, which can then

be combined in different ways to create multiple test

forms. This method allows many different forms to

be assembled from a pool of questions.

Test Specifications

Test content specifications are determined primarily

through the curriculum survey, the expertise of the

committee and test development specialists, the

recommendations of appropriate councils and

conferences, textbook reviews and other appropriate

sources of information. Content specifications take

into account:

• the purpose of the test

• the intended test-taker population

• the titles and descriptions of courses the test is designed to reflect

• the specific subject matter and abilities to be tested

• the length of the test, types of questions and instructions to be used

Recommendation of the American Council on Education (ACE)

The American Council on Education’s College

Credit Recommendation Service (ACE CREDIT)

has evaluated CLEP processes and procedures for

developing, administering and scoring the exams.

Effective July 2001, ACE recommended a uniform

credit-granting score of 50 across all subjects (with

additional Level-2 recommendations for the world

language examinations), representing the

performance of students who earn a grade of C in

the corresponding course. Every test title has a

minimum score of 20, a maximum score of 80 and a

cut score of 50. However, these score values cannot

be compared across exams. The score scale is set so

that a score of 50 represents the performance

expected of a typical C student, which may differ

from one subject to another. The score scale is not

based on actual performance of test-takers. It is

derived from the judgment of a panel of experts

(college faculty who teach the course) who provide

information on the level of student performance that

would be necessary to receive college credit in the

course.

Over the years, the CLEP examinations have been

adapted to adjust to changes in curricula and

pedagogy. As academic disciplines evolve, college

faculty incorporate new methods and theory into

their courses. CLEP examinations are revised to

reflect those changes so the examinations continue to

meet the needs of colleges and students. The CLEP

program’s most recent ACE CREDIT review was

held in June 2015.

The American Council on Education, the major coordinating body for all the nation’s higher education institutions, seeks to provide leadership and a unifying voice on key higher education issues and to influence public policy through advocacy, research and program initiatives. For more information, visit the ACE CREDIT website at www.acenet.edu/acecredit.


CLEP Credit Granting

CLEP uses a common recommended credit-granting

score of 50 for all CLEP exams.

This common credit-granting score does not mean,

however, that the standards for all CLEP exams are

the same. When a new or revised version of a test is

introduced, the program conducts a standard setting

to determine the recommended credit-granting score

(“cut score”).

A standard-setting panel, consisting of 15–20 faculty

members from colleges and universities across the

country who are currently teaching the course, is

appointed to give its expert judgment on the level

of student performance that would be necessary to

receive college credit in the course. The panel

reviews the test and test specifications and defines

the capabilities of the typical A student, as well as

those of the typical B, C and D students.* Expected

individual student performance is rated by each

panelist on each question. The combined average of

the ratings is used to determine a recommended

number of examination questions that must be

answered correctly to mirror classroom performance

of typical B and C students in the related course.

The panel’s findings are given to members of the test

development committee who, with the help of

Educational Testing Service and College Board

psychometric specialists, make a final determination

on which raw scores are equivalent to B and C levels

of performance.
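As a rough illustration of the averaging step described above, the following Python sketch totals each panelist's per-question ratings and then averages those totals across the panel. The rating scale (a probability from 0 to 1 that a typical C student answers the question correctly), the sample ratings and the function name are all assumptions made for illustration; this guide does not specify the rating scale or the exact computation the panel uses.

```python
from statistics import mean

def recommended_raw_cut(ratings_by_panelist):
    """Average, across panelists, of each panelist's expected number correct."""
    # Each inner list holds one panelist's ratings: the assumed probability
    # (0.0 to 1.0) that a typical C student answers each question correctly.
    expected_correct = [sum(ratings) for ratings in ratings_by_panelist]
    return mean(expected_correct)

# Hypothetical ratings from three panelists on a four-question test.
example = [
    [0.5, 0.75, 0.25, 0.5],
    [0.5, 0.5, 0.5, 0.5],
    [0.75, 0.75, 0.25, 0.25],
]
print(recommended_raw_cut(example))
```

In this sketch the result is only a starting recommendation; as the text notes, the test development committee and psychometric specialists make the final determination.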

*Student performance for the language exams (French, German and Spanish)

is defined only at the B and C levels.


Principles of Marketing

Description of the Examination

The Principles of Marketing examination covers

material that is usually taught in one-semester

introductory courses in marketing. Such a course is usually

known as Basic Marketing, Introduction to

Marketing, Fundamentals of Marketing, Marketing

or Marketing Principles. The exam is concerned

with the role of marketing in society and within a

firm, understanding consumer and organizational

markets, marketing strategy planning, the marketing

mix, marketing institutions, and other selected

topics, such as international marketing, ethics,

marketing research, services and not-for-profit

marketing. The candidate is also expected to have a

basic knowledge of the economic/demographic,

social/cultural, political/legal and technological

trends that are important to marketing.

The examination contains approximately

100 questions to be answered in 90 minutes.

Some of these are pretest questions that will not

be scored. Any time candidates spend on tutorials

and providing personal information is in addition

to the actual testing time.

Knowledge and Skills Required

The subject matter of the Principles of Marketing

examination is drawn from the following topics

in the approximate proportions indicated. The

percentages next to the main topics indicate the

approximate percentage of exam questions on

that topic.

8%–13% Role of Marketing in Society

Ethics

Nonprofit marketing

International marketing

17%–24% Role of Marketing in a Firm

Marketing concept

Marketing strategy

Marketing environment

Marketing decision system

• Marketing research

• Marketing information system

22%–27% Target Marketing

Consumer behavior

Segmentation

Positioning

Business-to-business markets

40%–50% Marketing Mix

Product and service management

Branding

Pricing policies

Distribution channels and logistics

Integrated marketing communications/Promotion

Marketing application in e-commerce


Sample Test Questions

The following sample questions do not appear on

an actual CLEP examination. They are intended to

give potential test-takers an indication of the format

and difficulty level of the examination and to

provide content for practice and review. Knowing

the correct answers to all of the sample questions

is not a guarantee of satisfactory performance on

the exam.

Directions: Each of the questions or incomplete

statements below is followed by five suggested

answers or completions. Select the one that is best

in each case.

1. A manufacturer of car batteries, who has been

selling through an automotive parts wholesaler

to garages and service stations, decides to sell

directly to retailers. Which of the following will

necessarily occur?

(A) Elimination of the wholesaler’s profit will

result in a lower price to the ultimate

consumer.

(B) Elimination of the wholesaler’s marketing

functions will increase efficiency.

(C) The total cost of distribution will be reduced

because of the elimination of the wholesaler.

(D) The marketing functions performed by the

wholesaler will be eliminated.

(E) The wholesaler’s marketing functions will

be shifted to or shared by the manufacturer

and the retailer.

2. Which of the following strategies for entering

the international market would involve the

highest risk?

(A) Joint ventures

(B) Exporting

(C) Licensing

(D) Direct investment

(E) Franchising

3. For a United States manufacturer of major

consumer appliances, the most important

leading indicator for forecasting sales is

(A) automobile sales

(B) computer sales

(C) educational level of consumers

(D) housing starts

(E) number of business failures

4. Which of the following statements about the

European Union is true?

(A) The EU creates a single Pan-European

government.

(B) The EU eliminates trade barriers among

member countries.

(C) The EU is considered the United States of

Europe, with its capital in Brussels.

(D) The EU removes all internal and external

trade barriers to global trade.

(E) The EU minimizes inflation through price

controls.

5. In contrast to a selling orientation, a marketing

orientation seeks to

(A) increase market share by emphasizing

promotion

(B) increase sales volume by lowering price

(C) lower the cost of distribution by direct

marketing

(D) satisfy the needs of targeted consumers

at a profit

(E) market products that make efficient use

of the firm’s resources

6. All of the following are characteristics of

services EXCEPT

(A) intangibility

(B) heterogeneity

(C) inseparability

(D) perishability

(E) inflexibility


7. A fertilizer manufacturer who traditionally

markets to farmers through farm supply dealers

and cooperatives decides to sell current products

to home gardeners through lawn and garden

shops. This decision is an example of

(A) market penetration

(B) market development

(C) product development

(D) diversification

(E) vertical integration

8. A manufacturer who refuses to sell to dealers its

popular line of office copiers unless the dealers

also agree to stock the manufacturer’s line of

paper products would most likely be guilty of

which of the following?

(A) Deceptive advertising

(B) Price discrimination

(C) Price fixing

(D) Reciprocity

(E) Tying contracts

9. Which of the following is an intermediary in the

distribution channel that moves goods without

taking title to them?

(A) Agent

(B) Wholesaler

(C) Merchant

(D) Retailer

(E) Dispenser

10. In which of the following situations is the

number of buying influences most likely to be

greatest?

(A) A university buys large quantities of paper

for computer printers on a regular basis.

(B) A computer manufacturer is building a new

headquarters and is trying to choose a line

of office furniture.

(C) A consumer decides to buy a different brand

of potato chips because they are on sale.

(D) A retail chain is searching for a vendor of

lower-priced cleaning supplies.

(E) A purchasing manager has been asked to

locate a second source of supply for

corrugated shipping cartons.

11. Which of the following best describes the

process of selecting target markets in order

to formulate a marketing mix?

(A) Strategic planning

(B) Product differentiation

(C) Market segmentation

(D) Marketing audit

(E) SWOT analysis

12. Cooperative advertising is usually undertaken by

manufacturers in order to

(A) secure the help of the retailer in promoting a

given product

(B) divide responsibilities between the retailers

and wholesalers within a channel of

distribution

(C) satisfy legal requirements

(D) create a favorable image of a particular

industry in the minds of consumers

(E) provide a subsidy for smaller retailers that

enables them to match the prices set by

chain stores


13. A marketer usually offers a noncumulative

quantity discount in order to

(A) reward customers for repeat purchases

(B) reduce advertising expenses

(C) encourage users to purchase in large

quantities

(D) encourage buyers to submit payment

promptly

(E) ensure the prompt movement of goods

through the channel of distribution

14. Which of the following statements about

secondary data is correct?

(A) Secondary data are usually more expensive

to obtain than primary data.

(B) Secondary data are usually available in a

shorter period of time than primary data.

(C) Secondary data are usually more relevant to

a research objective than are primary data.

(D) Secondary data must be collected outside

the firm to maintain objectivity.

(E) Previously collected data are not secondary

data.

15. Missionary salespersons are most likely to do

which of the following?

(A) Sell cosmetics directly to consumers in their

own homes

(B) Take orders for air conditioners to be used

in a large institution

(C) Describe drugs and other medical supplies

to physicians

(D) Secure government approval to sell heavy

machinery to a foreign government

(E) Take orders for custom-tailored garments or

other specially produced items

16. The demand for industrial goods is sometimes

called “derived” because it depends on

(A) economic conditions

(B) demand for consumer goods

(C) governmental activity

(D) availability of labor and materials

(E) the desire to make a profit

17. Behavioral research generally indicates that

consumers’ attitudes

(A) do not change very easily or quickly

(B) are very easy to change through promotion

(C) cannot ever be changed

(D) can only be developed through actual

experience with products

(E) are very accurate predictors of actual

purchasing behavior

18. A channel of distribution refers to the

(A) routing of goods through distribution

centers

(B) sequence of marketing intermediaries from

producer to consumer

(C) methods of transporting goods from

producer to consumer

(D) suppliers who perform a variety of functions

(E) traditional handlers of a product line

19. A major advantage of distributing products by

truck is

(A) low cost relative to rail or water

(B) low probability of loss or damage to cargo

(C) accessibility to pick-up and delivery

locations

(D) speed relative to rail or air

(E) ability to handle a wider variety of products

than other means


20. If a firm is using penetration pricing, the firm is

most likely trying to achieve which of the

following pricing objectives?

(A) Product quality leadership

(B) Market-share maximization

(C) High gross margin

(D) Status quo

(E) Geographic flexibility

21. If a company decides to allocate more resources

to personal selling and sales promotion by its

resellers, which of the following strategies is it

using?

(A) Pull strategy

(B) Push strategy

(C) Direct selling strategy

(D) Indirect selling strategy

(E) Integrated marketing communication

22. Marketing strategy planning includes

(A) supervising the activities of the firm’s

sales force

(B) determining the most efficient way to

manufacture products

(C) selecting a target market and developing the

marketing mix

(D) determining the reach and frequency of

advertising

(E) monitoring sales in response to a price

change

23. A brand that has achieved brand insistence and

is considered a specialty good by the target

market suggests which of the following

distribution objectives?

(A) Widespread distribution near probable

points of use

(B) Exclusive distribution

(C) Intensive distribution

(D) Enough exposure to facilitate price

comparison

(E) Widespread distribution at low cost

24. Market segmentation that is concerned with

people over 65 years of age is called

(A) geographic

(B) socioeconomic

(C) demographic

(D) psychographic

(E) behavioral

25. The XYZ Corporation has two chains of

restaurants. One restaurant specializes in family

dining with affordable meals. The second

restaurant targets young, single individuals, and

offers a full bar and small servings. The XYZ

Corporation uses which form of targeted

marketing strategy?

(A) Mass marketing

(B) Differentiated marketing

(C) Undifferentiated marketing

(D) Customized marketing

(E) Concentrated marketing

26. The marketing director of a manufacturing

company says, “If my wholesaler exceeds the

sales record from last month, I agree to give him

a paid trip to the Bahamas.” This technique is a

form of

(A) sales promotion

(B) advertising

(C) personal selling

(D) direct marketing

(E) public relations

27. Using a combination of different modes of

transportation to move freight in order to exploit

the best features of each mode is called

(A) conventional distribution

(B) developing dual distribution

(C) selective distribution

(D) intermodal transportation

(E) freight forwarding


28. Which of the following is a major disadvantage

associated with the use of dual distribution?

(A) It is usually very expensive.

(B) It can cause channel conflict.

(C) It provides limited market coverage.

(D) It is only appropriate for corporate channels.

(E) Some distribution channel functions are not

completed.

29. The estimated market value of a brand is best

described as brand

(A) equity

(B) benefit

(C) worth

(D) merit

(E) return on investment

30. Which of the following would be considered a

nonprofit organization?

(A) A homeless shelter that charges a fee for its

services and uses the proceeds for the

upkeep of the shelter

(B) A drug rehabilitation center in which

revenues in excess of cost go to the owners

(C) A vaccination clinic owned by an individual

entrepreneur

(D) A bookstore open to the public for business

(E) A hospital that has a publicly traded

common stock

31. Which of the following approaches for entering

international markets involves granting the rights

to a patent, trademark, or manufacturing process

to a foreign company?

(A) Exporting

(B) Franchising

(C) Licensing

(D) Joint venturing

(E) Contract manufacturing

32. Reference groups are more likely to influence a

consumer’s purchase when the product being

purchased is

(A) important

(B) inexpensive

(C) familiar

(D) intangible

(E) socially visible

33. Which of the following is true of the product

life cycle?

(A) It can accurately forecast the growth of new

products.

(B) It reveals that branded products have the

longest growth phase.

(C) It cannot be applied to computer products

that quickly become obsolete.

(D) It is based on the assumption that products

go through distinct stages in sales and profit

performance.

(E) It proves that profitability is highest in the

mature phase.

34. The primary purpose of market segmentation

is to

(A) combine different groups to meet their needs

(B) create sales territories of similar size and

market potential to determine sales quotas

(C) reduce market demand to a manageable size

(D) profile the market as a whole to optimize

marketing efforts

(E) allocate marketing resources to meet the

needs of specific segments


35. A marketing expert said that he could have

advertised a brand of soap as a detergent bar

for men with dirty hands, but instead chose to

advertise it as a moisturizing bar for women with

dry skin. This illustrates the marketing principle

known as

(A) product positioning

(B) sales promotion

(C) cannibalization

(D) deceptive advertising

(E) undifferentiated marketing

36. The process of identifying people or companies

who may have a need for a salesperson’s product

is known as

(A) cold calling

(B) presenting

(C) approaching

(D) prospecting

(E) targeting

37. Which of the following is a primary

disadvantage of direct marketing?

(A) It is difficult to measure response.

(B) It is not personal.

(C) It is poorly targeted.

(D) It tends to have high costs per contact.

(E) It has a fragmented audience.

38. A formal statement of standards that governs

professional conduct is called a

(A) customer bill of rights

(B) business mission statement

(C) corporate culture

(D) code of ethics

(E) caveat emptor

39. Maxine suddenly realizes that she is out of paper

towels. She remembers that she last bought

Max Dri Towels, so she stops at the store and

picks up another roll of Max Dri on her way

home from work. In this example, Maxine uses

what form of information search in her decision

process?

(A) Limited problem solving

(B) Extended problem solving

(C) Internal information search

(D) Compensatory information search

(E) Information search by personal sources

40. The ability to tailor marketing processes to fit

the specific needs of an individual customer is

called

(A) customization

(B) community building

(C) standardization

(D) mediation

(E) product differentiation

41. Which of the following is true of price

skimming?

(A) It requires intermediaries to provide

kickback payments.

(B) It calls for relatively high prices to start,

reducing over time.

(C) It is reserved for products in the late stages

of the product life cycle.

(D) It works best in situations with highly elastic

demand.

(E) It is illegal in most jurisdictions in the

United States.

42. ABC Company agrees to pay a certain amount

of a retailer’s promotional costs for advertising

ABC’s products. This is an example of

(A) cooperative advertising

(B) reminder advertising

(C) comparison advertising

(D) slotting allowance

(E) a premium


43. To save time and money, a marketing research

team uses data that have already been gathered

for some other purpose. Which type of data is

the team using?

(A) Sample

(B) Primary

(C) Secondary

(D) Survey

(E) Experiment

44. Positioning refers to

(A) the perception of a product in customers’

minds

(B) the store location in which a marketing

manager suggests a product be displayed

(C) where the product is placed on the shelf

(D) which stores will distribute a company’s

product

(E) where the new product is first advertised

45. Which of the following best describes members

of a buying center?

(A) Everyone involved in making the purchase

decision

(B) Everyone involved in signing contracts

(C) Everyone employed in the purchasing

department

(D) Direct reports of the vice president of

purchasing

(E) Technical experts who advise on product

specifications

46. Which of the following most accurately

describes agent wholesalers?

(A) They take title to goods that they sell to

other intermediaries.

(B) They do not take title to goods that they sell

to other intermediaries.

(C) They take title to goods that they sell to final

consumers.

(D) They do not take title to the goods that they

sell on commission to final consumers.

(E) They manufacture the goods that they sell to

final consumers.

47. Which of the following are key characteristics of

services?

(A) Cost, time, quality, and value

(B) Uncertainty, variability, and standardization

(C) Intangibility, durability, and standardization

(D) Intangibility, perishability, and variability

(E) Tangibility, variability, and uncertainty

48. The growth of service industries is primarily the

result of

(A) increasingly complex and specialized

customer needs

(B) a rise in income and the degree of customer

input

(C) rapid growth of population and government

tracking systems

(D) increased use of labor-intensive technology

(E) decreasing demand for equipment-based

services

49. Which of the following is the fastest growing

nonstore retail segment in the United States?

(A) Television home shopping

(B) Automatic vending

(C) Online retailing

(D) Catalog marketing

(E) Direct-response marketing


50. While writing a marketing plan, Melanie

decides that the marketing objective should

be “to increase market share by 5 percent.”

A weakness in this objective is that

(A) it is not measurable

(B) it should be related to brand image rather

than market share

(C) it does not specify a time period

(D) if the product is an industrial product, the

objective should specify product quality

(E) it does not address all elements of the

business plan

51. Which of the following is the first step in the

sales process?

(A) Prospecting

(B) Sales presentation

(C) Gaining commitment

(D) Approach

(E) Precall planning

52. Which of the following is an example of

business-to-business buying?

(A) John buys a new home stereo.

(B) Hannah pays for a new television by

monthly installment.

(C) Daniel decides on which college to attend.

(D) Avery purchases a new office desk for

his company.

(E) Corey buys a soft drink from a vending

machine.

53. President John F. Kennedy’s assertion that

consumers have certain rights led to legislation

that guaranteed all of the following EXCEPT

the right to

(A) be informed

(B) be heard

(C) choose

(D) bargain

(E) safety

54. Harmony Households is the largest home

improvement retailer in China. Because of its

size and market power, the firm can insist upon

desired product features, delivery schedules, and

price points from its suppliers. Harmony

Households is

(A) a primary intermediary

(B) an agent retailer

(C) a multilevel distributor

(D) a channel captain

(E) a dominant distributor

55. Which of the following is NOT a segmentation

criterion or consideration used for choosing a

target market?

(A) Market accountability

(B) Market identifiability and measurability

(C) Market substantiality

(D) Market accessibility

(E) Market responsiveness

56. Rachel Terry, regional manager at Wilcon

Solvents, Inc., compares last quarter’s sales with

the levels projected in the firm’s marketing plan.

She identifies three solvent brands whose sales

are below projections and initiates a series of

inquiries to discover the reasons for the shortfall.

Ms. Terry is engaged in which stage of the

strategic marketing process?

(A) Environmental scanning

(B) Opportunity analysis

(C) Planning

(D) Implementation

(E) Control


57. Richard Weiss, SA, is a Swiss watch

manufacturer. One of its two major brands

offers ruggedness, reliability and durability

to active sports enthusiasts. The other offers

elegance and stylishness to fashion conscious

consumers. Which of the following segmentation

approaches is this firm using?

(A) Demographic

(B) Geographic

(C) Usage

(D) Benefits sought

(E) Socioeconomic

58. Compared with agent intermediaries, merchant

intermediaries

(A) sell only to organizational customers

(B) sell only in export markets

(C) are employees of the manufacturer

(D) are compensated by a commission on sales

(E) take title to the goods they sell

59. Gabriella’s is an Italian producer of fashion

jeans. In this highly competitive market, the

firm wishes to match the advertising efforts of

its competitors by achieving a share of voice

(promotion) which is roughly equal to its share

of market (sales). This approach to promotional

budgeting is called

(A) all you can afford

(B) percent of sales

(C) allocation per unit

(D) competitive parity

(E) objective and task

60. On its e-commerce Web site, XYZMusic.com sells

songs from new artists who produce their

own music. The site also hosts ads from other

e-businesses. The ads presented to a user depend

on the type of music the user is reviewing.

XYZMusic.com collects ad revenue when users

follow links in the ads to the advertisers’ Web

sites. XYZMusic.com’s advertising revenue is

based on

(A) cost-per-thousand exposures

(B) click-through rates

(C) ad presentation rates

(D) unique site-visitor data

(E) site-traffic data

61. Which of the following organizations

administers the GATT (General Agreement

on Tariffs and Trade)?

(A) The United Nations

(B) The European Union

(C) The World Trade Organization

(D) The North American Free Trade Agreement

(E) The Securities and Exchange Commission

62. At the most basic level, products and services

should be viewed as a collection of

(A) attributes

(B) expectations

(C) benefits

(D) features

(E) promises

63. The primary function of promotion is to

(A) sell products and services

(B) create awareness

(C) inform, persuade, and remind

(D) make a demand more elastic

(E) eliminate competition


64. A marketing strategy is composed of both

(A) a target market and market opportunities

(B) a target market and related marketing mix

(C) a target market and SWOT analysis

(D) a marketing mix and required resources

(E) a marketing mix and competition

65. Which of the following is NOT an advantage of

Internet advertising as compared with traditional

advertising?

(A) Target-market selectivity

(B) Tracking ability

(C) Exclusivity

(D) Deliverability

(E) Interactivity

66. Which of the following statements most

accurately describes antidumping laws?

(A) They set the price that foreign producers

must charge.

(B) They control the maximum price of

imported products.

(C) They prevent foreign producers from

competing on the basis of price.

(D) They prevent foreign-manufactured goods

from selling at below cost.

(E) They protect consumers from cheaply

manufactured foreign products.

67. In selling a new global logistics information

system to a large client, the national account

manager of a leading supply chain management

vendor learns that client executives from

marketing, production, human resources,

finance, and business strategy will participate in

the decision-making process. Which of the

following terms best describes the scope of the

buying initiative?

(A) Universal

(B) Specialized

(C) Standardized

(D) Cross-functional

(E) Decentralized

68. Which of the following lists the correct sequence

of steps in the consumer decision-making

process?

(A) Need recognition, evaluation, purchase

decision, invoked set, postpurchase behavior

(B) Felt need, response to stimulus, evaluation

of alternatives, postpurchase decision,

purchase behavior

(C) Alternative invoked set, need recognition,

purchase decision, postpurchase evaluation

(D) Information search, need positioning,

evaluation of alternatives, product purchase

decision, postpurchase satisfaction

(E) Need recognition, information search,

evaluation of alternatives, purchase,

postpurchase behavior

69. Consumers tend to be more satisfied with their

purchase of a product when

(A) cognitive dissonance develops after the

purchase

(B) the price of the product falls after the

purchase

(C) they research the product before the

purchase

(D) their opinions are inconsistent with their

values

(E) there is no further contact with the seller

70. Which of the following is true of global

marketing standardization?

(A) It occurs more frequently with consumer

products than with industrial goods.

(B) It encourages individualized variation in the

product, packaging, and pricing for each

nation or local market.

(C) It addresses legal and cultural differences.

(D) It assumes that global consumers

increasingly have similar needs.

(E) It reduces profit margins.


71. The three types of marketing research are

(A) explanatory, normative, and descriptive

(B) predictive, normative, and innovative

(C) interactive, diagnostic, and predictive

(D) proactive, interactive, and reactive

(E) exploratory, descriptive, and causal

72. A product is classified as a business product

rather than a consumer product based on its

(A) tangible and intangible attributes

(B) life-cycle position

(C) promotion type

(D) pricing strategy

(E) intended use

73. Which one of the following changes would most

likely motivate a firm to reposition a brand?

(A) Shifting demographics

(B) Stock market fluctuations

(C) An economic downturn

(D) Changes in available financial resources

(E) Rising sales

74. An advertisement for prospective applicants to a

college shows individual students along with the

slogan “I am getting ready to seize my destiny.”

The ad appeals to which need in Maslow’s

hierarchy?

(A) Physiological

(B) Esteem

(C) Social affiliation

(D) Self-actualization

(E) Safety

75. If two brands move closer to each other on a

perceptual (positioning) map, it means that they

have become

(A) less perceptually alike

(B) closer in price

(C) more objectively alike

(D) less likely to be direct competitors

(E) more similarly perceived by consumers

76. Lutèce Brands, Inc., acknowledges that its

French pastries may contribute to health

problems in some of its customers, but the

company claims that the benefits (such as

personal pleasure and taste) outweigh the risks.

The company’s approach to moral reasoning is

best described as

(A) moral idealism

(B) utilitarianism

(C) categorical imperative

(D) enlightened self-interest

(E) situational ethics

77. Which of the following lists the correct order of

the steps of the target marketing process?

(A) Segmentation, positioning, and targeting

(B) Targeting, segmentation, and positioning

(C) Positioning, targeting, and segmentation

(D) Segmentation, targeting, and positioning

(E) Positioning, segmentation, and targeting

78. Which of the following types of marketing

communications tends to have the shortest-term

focus and objectives?

(A) Brand advertising

(B) Public relations

(C) Sales promotion

(D) Event sponsorship

(E) Corporate advertising


Directions: Select a choice and click on the blank in

which you want the choice to appear. Repeat until all

of the blanks have been filled. A correct answer must

have a different choice in each blank.

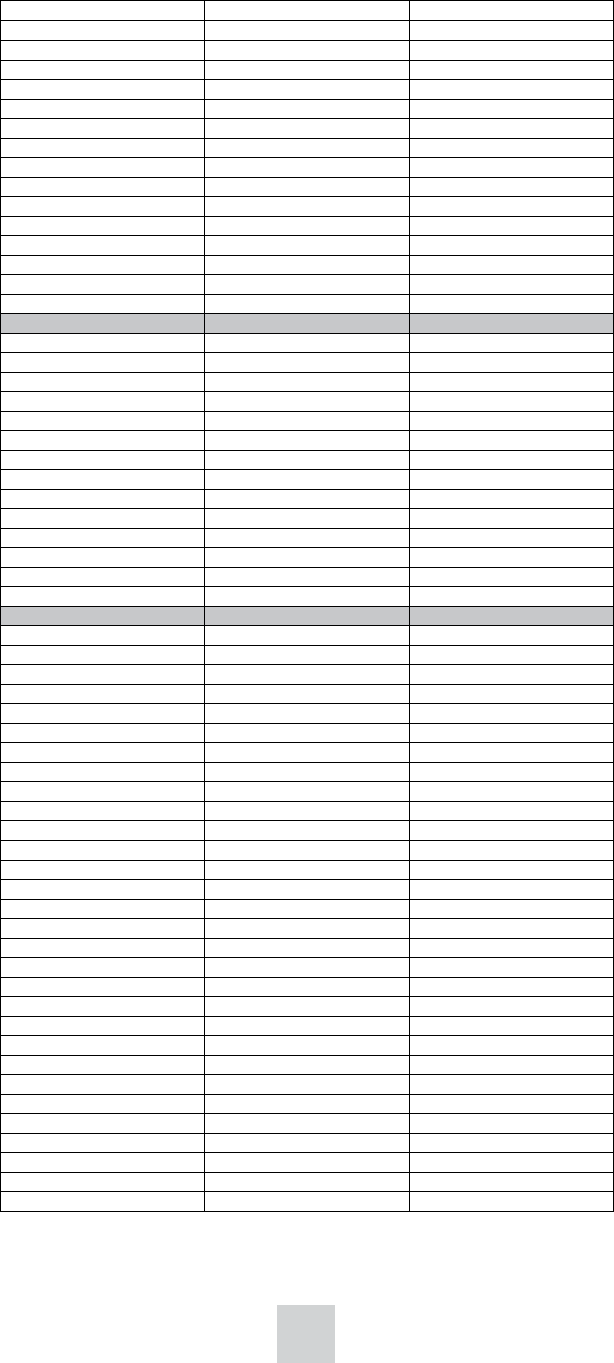

79. Place the four steps in the marketing research

process in the correct order.

Determine the research design.

Define the problem.

Collect data.

Choose the data collection method.

Directions: Choose among the corresponding

environments in the columns for each entry by

clicking on your choice. When you click on a blank

cell a check mark will appear. No credit is given

unless the correct cell is marked for each entry.

80. For each of the following events, indicate which

kind of environment it belongs to.

| Event | Sociocultural Environment | Economic Environment | Technological Environment |
|---|---|---|---|
| The development of new production techniques | | | |
| The growing Latino population | | | |
| Rising mortgage rates | | | |


Study Resources

Most textbooks used in college-level principles of

marketing courses cover the topics in the outline

given earlier, but the approaches to certain topics

and the emphases given to them may differ. To

prepare for the Principles of Marketing exam, it is

advisable to study one or more college textbooks,

which can be found in most college bookstores.

When selecting a textbook, check the table of

contents against the knowledge and skills required

for this test. Please note that textbooks are updated

frequently; it is important to use the latest editions

of the textbooks you choose. Most textbooks now

have study guides, computer applications and

case studies to accompany them. These learning

aids could prove useful in the understanding and

application of marketing concepts and principles.

You can broaden your understanding of marketing

principles and their applications by keeping abreast

of current developments in the field from articles

in newspapers and news magazines as well as in

business publications such as The Wall Street

Journal, Business Week, Harvard Business Review,

Fortune, Ad Week and Advertising Age. Journals

found in most college libraries that will help you

expand your knowledge of marketing principles

include Journal of Marketing, Marketing Today,

Journal of the Academy of Marketing Science,

American Demographics and Marketing Week.

Books of readings, such as Annual Editions

— Marketing, also are sources of current thinking.

Visit clep.collegeboard.org/test-preparation for

additional marketing resources. You can also find

suggestions for exam preparation in Chapter IV of

the Official Study Guide. In addition, many college

faculty post their course materials on their schools’

websites.

Answer Key

| Questions 1-27 | Questions 28-54 | Questions 55-80 |
|---|---|---|
| 1. E | 28. B | 55. A |
| 2. D | 29. A | 56. E |
| 3. D | 30. A | 57. D |
| 4. B | 31. C | 58. E |
| 5. D | 32. E | 59. D |
| 6. E | 33. D | 60. B |
| 7. B | 34. E | 61. C |
| 8. E | 35. A | 62. C |
| 9. A | 36. D | 63. C |
| 10. B | 37. D | 64. B |
| 11. C | 38. D | 65. C |
| 12. A | 39. C | 66. D |
| 13. C | 40. A | 67. D |
| 14. B | 41. B | 68. E |
| 15. C | 42. A | 69. C |
| 16. B | 43. C | 70. D |
| 17. A | 44. A | 71. E |
| 18. B | 45. A | 72. E |
| 19. C | 46. B | 73. A |
| 20. B | 47. D | 74. D |
| 21. B | 48. A | 75. E |
| 22. C | 49. C | 76. B |
| 23. B | 50. C | 77. D |
| 24. C | 51. A | 78. C |
| 25. B | 52. D | 79. See below |
| 26. A | 53. D | 80. See below |
| 27. D | 54. D | |

79. Define the problem.

Determine the research design.

Choose the data collection method.

Collect data.

80.

| Event | Sociocultural Environment | Economic Environment | Technological Environment |
|---|---|---|---|
| The development of new production techniques | | | ✓ |
| The growing Latino population | ✓ | | |
| Rising mortgage rates | | ✓ | |


Test Measurement Overview

Format

There are multiple forms of the computer-based test,

each containing a predetermined set of scored

questions. The examinations are not adaptive. There

may be some overlap between different forms of a

test: any of the forms may have a few questions,

many questions, or no questions in common. Some

overlap may be necessary for statistical reasons.

In the computer-based test, not all questions

contribute to the candidate’s score. Some of the

questions presented to the candidate are being

pretested for use in future editions of the tests and

will not count toward his or her score.

Scoring Information

CLEP examinations are scored without a penalty for

incorrect guessing. The candidate’s raw score is

simply the number of questions answered correctly.

However, this raw score is not reported; the raw

scores are translated into a scaled score by a process

that adjusts for differences in the difficulty of the

questions on the various forms of the test.
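The raw-score rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the scoring software actually used for CLEP: the function name `raw_score`, the dictionary layout, and the `pretest_items` parameter are assumptions made for this example. The four keyed answers are those given in this guide's answer key for sample questions 31 through 34.

```python
def raw_score(responses, answer_key, pretest_items=frozenset()):
    """Count correctly answered scored questions.

    responses:     dict mapping question number -> chosen letter
                   (omitted questions are simply absent)
    answer_key:    dict mapping question number -> correct letter
    pretest_items: question numbers being pretested, which do not
                   count toward the score (hypothetical parameter)
    """
    return sum(
        1
        for question, correct in answer_key.items()
        if question not in pretest_items and responses.get(question) == correct
    )


# Keyed answers for sample questions 31-34 in this guide.
answer_key = {31: "C", 32: "E", 33: "D", 34: "E"}

# One wrong answer (32) and one omitted question (34): neither is penalized.
responses = {31: "C", 32: "A", 33: "D"}

score_all_scored = raw_score(responses, answer_key)
score_with_pretest = raw_score(responses, answer_key, pretest_items={33})
```

In this sketch a wrong guess and a blank contribute the same amount (nothing), which is why there is no scoring disadvantage to guessing.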

Scaled Scores

The scaled scores are reported on a scale of 20–80.

Because the different forms of the tests are not

always exactly equal in difficulty, raw-to-scale

conversions may in some cases differ from form to

form. The easier a form is judged to be, the higher

the raw score required to attain a given scaled score.

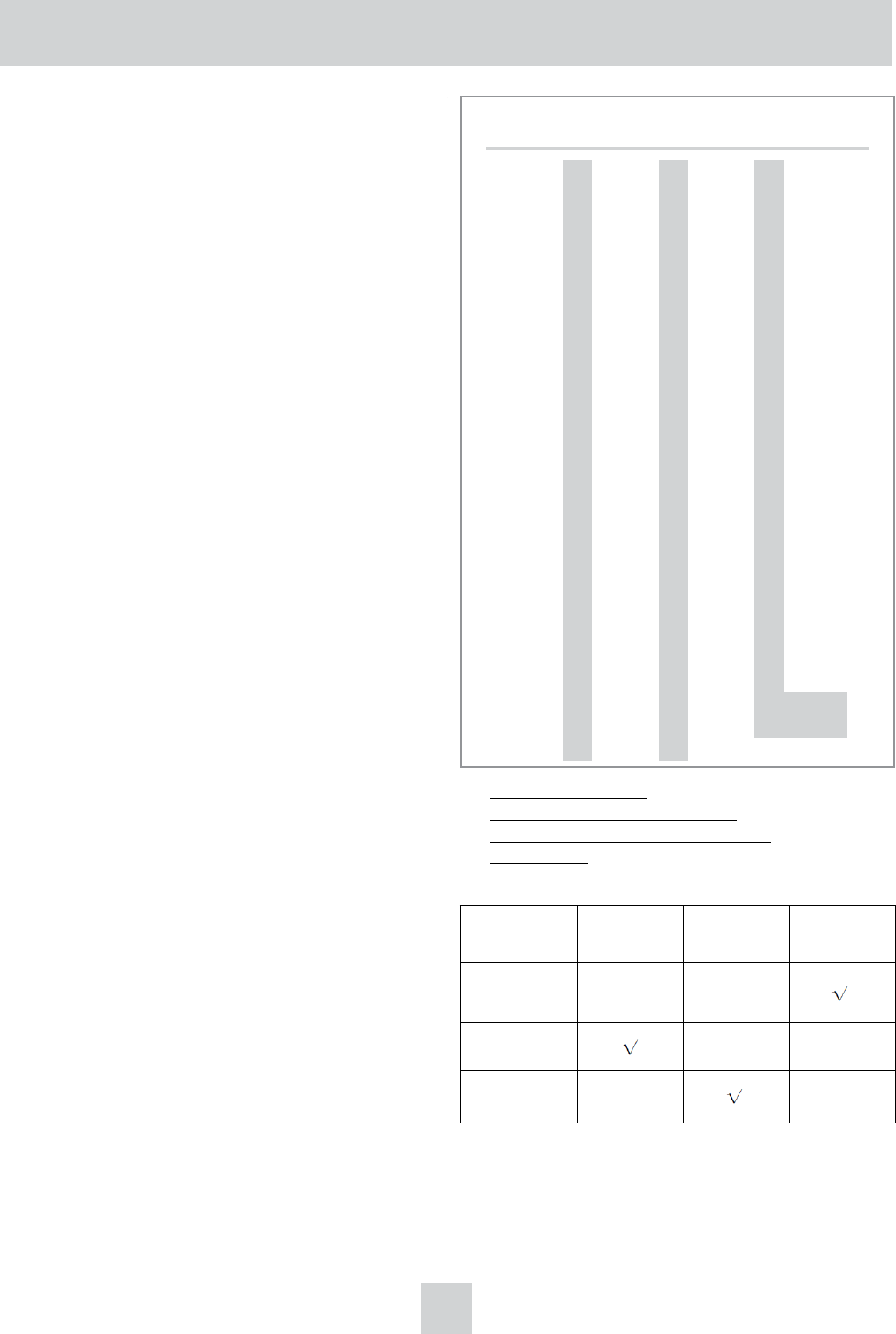

Table 1 indicates the relationship between number

correct (raw score) and scaled score across all forms.

The Recommended Credit-Granting Score

Table 1 also indicates the recommended

credit-granting score, which represents the

performance of students earning a grade of C in the

corresponding course. The recommended B-level

score represents B-level performance in equivalent

course work. These scores were established as the

result of a Standard Setting Study, the most recent

having been conducted in 2007. The recommended

credit-granting scores are based upon the judgments

of a panel of experts currently teaching equivalent

courses at various colleges and universities. These

experts evaluate each question in order to determine

the raw scores that would correspond to B and C levels

of performance. Their judgments are then reviewed by

a test development committee, which, in consultation

with test content and psychometric specialists, makes a

final determination. The standard-setting study is

described more fully in the earlier section entitled

“CLEP Credit Granting” on page 5.

Panel members participating in the most recent study

were:

| Panel member | Institution |
|---|---|
| Richard Dailey | University of Texas at Arlington |
| Laura Earner | Saint Xavier University |
| Nathan Himelstein | Essex County College |
| DeAnna Kempf | Middle Tennessee State University |
| Michael Levas | Carroll College |
| Erika Matulich | University of Tampa |
| Brenda McAleer | University of Maine at Augusta |
| Sunder Narayanan | New York University, Stern School of Business |
| Pookie Sautter | New Mexico State University |
| Melinda Schmitz | Pamlico Community College |
| Susan Sieloff | Northeastern University |
| Ian Sinapuelas | Washington State University |
| Carl Sonntag | Pikes Peak Community College |
| Gary White | Bucks County Community College |
| Mark Young | Winona State University |
| Martha Zenns | Jamestown Community College |

After the recommended credit-granting scores are

determined, a statistical procedure called scaling is

applied to establish the exact correspondences

between raw and scaled scores. Note that a scaled

score of 50 is assigned to the raw score that

corresponds to the recommended credit-granting

score for C-level performance, and a high but usually

less-than-perfect raw score is selected and assigned a

scaled score of 80.


Table 1: Principles of Marketing

Interpretive Score Data

American Council on Education (ACE) Recommended Number of Semester Hours of Credit: 3

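| Course Grade | Scaled Score | Number Correct |
|---|---|---|
| | 80 | 80 |
| | 79 | 79 |
| | 78 | 77-78 |
| | 77 | 76-77 |
| | 76 | 75-76 |
| | 75 | 74 |
| | 74 | 73 |
| | 73 | 71-72 |
| | 72 | 70-71 |
| | 71 | 69-70 |
| | 70 | 68-69 |
| | 69 | 67-68 |
| | 68 | 66-67 |
| | 67 | 65-66 |
| | 66 | 63-64 |
| B | 65 | 62-63 |
| | 64 | 61-62 |
| | 63 | 60-61 |
| | 62 | 59-60 |
| | 61 | 58-59 |
| | 60 | 57-58 |
| | 59 | 56-57 |
| | 58 | 55-56 |
| | 57 | 54-55 |
| | 56 | 53 |
| | 55 | 52 |
| | 54 | 50-51 |
| | 53 | 49-50 |
| | 52 | 48-49 |
| | 51 | 47-48 |
| C | 50* | 46-47 |
| | 49 | 45-46 |
| | 48 | 44-45 |
| | 47 | 43-44 |
| | 46 | 42-43 |
| | 45 | 41 |
| | 44 | 40 |
| | 43 | 39 |
| | 42 | 38 |
| | 41 | 37 |
| | 40 | 36 |
| | 39 | 34-35 |
| | 38 | 33-34 |
| | 37 | 32-33 |
| | 36 | 31-32 |
| | 35 | 30-31 |
| | 34 | 29-30 |
| | 33 | 28-29 |
| | 32 | 27 |
| | 31 | 26 |
| | 30 | 25 |
| | 29 | 23-24 |
| | 28 | 22-23 |
| | 27 | 21-22 |
| | 26 | 20-21 |
| | 25 | 19-20 |
| | 24 | 18-19 |
| | 23 | 17-18 |
| | 22 | 16-17 |
| | 21 | 15-16 |
| | 20 | 0-15 |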

*Credit-granting score recommended by ACE.

Note: The number-correct scores for each scaled score on different forms may vary depending on form difficulty.
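Because Table 1 spans all forms, the number-correct ranges of neighboring scaled scores overlap, so a single raw score can correspond to more than one scaled score depending on the form taken. The Python sketch below illustrates this with five rows copied from Table 1. The names `TABLE_1_EXCERPT` and `possible_scaled_scores` are assumptions made for this example, and the lookup is only an illustration of how to read the table, not the conversion procedure used operationally.

```python
# Five rows copied from Table 1: scaled score -> (lowest, highest) number correct.
# Only raw scores 45-48 are fully covered by this excerpt; raw scores at its
# edges (44 and 49) also appear in Table 1 rows that are not included here.
TABLE_1_EXCERPT = {
    52: (48, 49),
    51: (47, 48),
    50: (46, 47),  # recommended credit-granting score (C level)
    49: (45, 46),
    48: (44, 45),
}


def possible_scaled_scores(raw, table=TABLE_1_EXCERPT):
    """Return every scaled score whose number-correct range contains `raw`."""
    return sorted(
        scaled for scaled, (low, high) in table.items() if low <= raw <= high
    )


scaled_for_47 = possible_scaled_scores(47)
scaled_for_46 = possible_scaled_scores(46)
```

Under this reading, 47 questions correct corresponds to a scaled score of 50 or 51, and 46 correct to 49 or 50, depending on the difficulty of the form.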


Validity

Validity is a characteristic of a particular use of the

test scores of a group of examinees. If the scores are

used to make inferences about the examinees’

knowledge of a particular subject, the validity of the

scores for that purpose is the extent to which those

inferences can be trusted to be accurate.

One type of evidence for the validity of test scores is

called content-related evidence of validity. It is

usually based upon the judgments of a set of experts

who evaluate the extent to which the content of the

test is appropriate for the inferences to be made

about the examinees’ knowledge. The committee

that developed the CLEP Principles of Marketing

examination selected the content of the test to reflect

the content of Principles of Marketing courses at

most colleges, as determined by a curriculum survey.

Since colleges differ somewhat in the content of the

courses they offer, faculty members should, and are

urged to, review the content outline and the sample

questions to ensure that the test covers core content

appropriate to the courses at their college.

Another type of evidence for test-score validity is

called criterion-related evidence of validity. It

consists of statistical evidence that examinees who

score high on the test also do well on other measures

of the knowledge or skills the test is being used to

measure. Criterion-related evidence for the validity

of CLEP scores can be obtained by studies

comparing students’ CLEP scores with the grades

they received in corresponding classes, or other

measures of achievement or ability. CLEP and the

College Board conduct these studies, called

Admitted Class Evaluation Service or ACES, for

individual colleges that meet certain criteria at the

college’s request. Please contact CLEP for more

information.

Reliability

The reliability of the test scores of a group of

examinees is commonly described by two statistics:

the reliability coefficient and the standard error of

measurement (SEM). The reliability coefficient is

the correlation between the scores those examinees

get (or would get) on two independent replications

of the measurement process. The reliability

coefficient is intended to indicate the

stability/consistency of the candidates’ test scores,

and is often expressed as a number ranging from

.00 to 1.00. A value of .00 indicates total lack of

stability, while a value of 1.00 indicates perfect

stability. The reliability coefficient can be interpreted

as the correlation between the scores examinees

would earn on two forms of the test that had no

questions in common.

Statisticians use an internal-consistency measure to

calculate the reliability coefficients for the CLEP

exam.¹ This involves looking at the statistical

relationships among responses to individual

multiple-choice questions to estimate the reliability

of the total test score. The SEM is an estimate of the

amount by which a typical test-taker’s score differs

from the average of the scores that a test-taker would

have gotten on all possible editions of the test. It is

expressed in score units of the test. Intervals

extending one standard error above and below the

true score for a test-taker will include 68 percent of

that test-taker’s obtained scores. Similarly, intervals

extending two standard errors above and below the

true score will include 95 percent of the test-taker’s

obtained scores. The standard error of measurement

is inversely related to the reliability coefficient. If the

reliability of the test were 1.00 (if it perfectly

measured the candidate’s knowledge), the standard

error of measurement would be zero.
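The inverse relationship described above is usually written, in classical test theory, as the formula below. This is offered as the standard textbook relation, on the assumption that it is the one intended here; the guide itself does not state the formula. The symbols $\sigma_X$ (standard deviation of obtained scores), $\rho_{XX'}$ (reliability coefficient), and $T$ (a test-taker's true score) are notation introduced for this sketch.

```latex
\[
\mathrm{SEM} = \sigma_X \sqrt{1 - \rho_{XX'}}
\]
% Perfect reliability gives a standard error of zero:
\[
\rho_{XX'} = 1.00 \;\Longrightarrow\; \mathrm{SEM} = 0
\]
% Intervals around the true score T:
\[
T \pm 1\,\mathrm{SEM} \ \text{(about 68 percent of obtained scores)}, \qquad
T \pm 2\,\mathrm{SEM} \ \text{(about 95 percent of obtained scores)}
\]
```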

An additional index of reliability is the conditional

standard error of measurement (CSEM). Since

different editions of this exam contain different

questions, a test-taker’s score would not be exactly

the same on all possible editions of the exam. The

CSEM indicates how much those scores would vary.

It is the typical distance of those scores (all for the

same test-taker) from their average. A test-taker’s

CSEM on a test cannot be computed, but by using

the data from many test-takers, it can be estimated.

The CSEM estimate reported here is for a test-taker

whose average score, over all possible forms of the

exam, would be equal to the recommended C-level

credit-granting score.

Scores on the CLEP examination in Principles of

Marketing are estimated to have a reliability

coefficient of 0.89. The standard error of measurement

is 3.38 scaled-score points. The conditional standard

error of measurement at the recommended C-level

credit-granting score is 3.74 scaled-score points.
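The reported figures can be turned into score intervals with a short calculation. The Python sketch below uses only the three statistics quoted above and the C-level scaled score of 50. The function names `score_band` and `implied_score_sd` are assumptions made for this example, and the second function assumes the classical relation SEM = SD × √(1 − reliability), which the guide does not state explicitly.

```python
import math

RELIABILITY = 0.89   # reported reliability coefficient
SEM = 3.38           # reported standard error of measurement (scaled-score points)
CSEM_AT_C = 3.74     # reported conditional SEM at the C-level score
C_LEVEL_SCORE = 50   # recommended credit-granting scaled score


def score_band(true_score, standard_error, n_errors=1):
    """Interval extending `n_errors` standard errors around `true_score`."""
    half_width = n_errors * standard_error
    return (true_score - half_width, true_score + half_width)


def implied_score_sd(sem, reliability):
    """Invert SEM = SD * sqrt(1 - reliability) (assumed classical relation)."""
    return sem / math.sqrt(1.0 - reliability)


# Bands for a test-taker whose true score equals the C-level score.
band_68 = score_band(C_LEVEL_SCORE, CSEM_AT_C, n_errors=1)
band_95 = score_band(C_LEVEL_SCORE, CSEM_AT_C, n_errors=2)

# Standard deviation of scaled scores implied by the reported SEM and reliability.
implied_sd = implied_score_sd(SEM, RELIABILITY)
```

Under these assumptions, about 68 percent of the obtained scores of a test-taker whose true score is 50 would fall between roughly 46.3 and 53.7, and about 95 percent between roughly 42.5 and 57.5.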

¹ The formula used is known as Kuder-Richardson 20, or KR-20, which is equivalent,

for questions scored right or wrong, to a more general formula called coefficient alpha.
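For readers who want to see how an internal-consistency estimate of this kind is computed, the following Python sketch implements the textbook KR-20 formula, (k / (k − 1)) × (1 − Σ pᵢ(1 − pᵢ) / σ²), where k is the number of questions, pᵢ is the proportion of test-takers answering question i correctly, and σ² is the variance of total scores. The function name `kr20`, the use of population variance, and the tiny response matrix are assumptions made for illustration; they are not CLEP data or the operational procedure.

```python
from statistics import pvariance


def kr20(responses):
    """Kuder-Richardson 20 for a matrix of 0/1 item scores.

    responses: list of rows, one per test-taker, each a list of 0 (wrong)
               or 1 (right) with one entry per question.
    Uses the population variance of total scores (a convention assumed here).
    """
    n_people = len(responses)
    n_items = len(responses[0])
    total_variance = pvariance([sum(row) for row in responses])

    # Sum of p * (1 - p) over questions, where p is the proportion correct.
    pq_sum = 0.0
    for item in range(n_items):
        p = sum(row[item] for row in responses) / n_people
        pq_sum += p * (1.0 - p)

    return (n_items / (n_items - 1)) * (1.0 - pq_sum / total_variance)


# Hypothetical responses: four test-takers, three questions.
sample = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
]
reliability_estimate = kr20(sample)
```

For this made-up matrix the proportions correct are 0.75, 0.50, and 0.25, the total-score variance is 1.25, and the estimate works out to 0.75.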
